Biomedical Graph Neural Network (GNN) Notebook¶

Real-World Fetal State Risk Detection via Graph Convolutional Networks¶

- Best held-out accuracy: 98.8% (ResidualClinicalGCN)

- Benchmark robustness: 95.49% ± 0.97% (Adaptive BioGCN, fixed split)

- Pathologic detection: 31 / 35, with 1 false positive

Goal: Build an end-to-end retrospective fetal-monitoring AI pipeline that (1) loads a real clinical cohort, (2) audits cohort composition and class imbalance, (3) constructs a physiologic similarity graph over cardiotocography exams, (4) trains and evaluates graph classifiers under clinically meaningful metrics, and (5) exports a presentation-ready HTML artifact.

Top-line contribution: this is the repository's strongest biomedical notebook because it combines a reproducible Adaptive BioGCN benchmark with a higher-performing ResidualClinicalGCN extension for deeper operating-point, calibration, and saliency analysis.

Aligned benchmark snapshot: this standalone notebook now includes the same repository-defined Adaptive BioGCN benchmark used in the combined quantum-biomedical notebook. In this notebook, Adaptive BioGCN is the plain-language label for the upgraded `AdaptiveBioGCN` architecture introduced later in Section 6A; it is not presented here as a standard published model name. On the canonical split with seed 42, that benchmark reaches 96.71% accuracy, 0.943 balanced accuracy, and 0.983 ROC AUC. Across five training seeds on the same fixed split, it reports 95.49% ± 0.97% accuracy, 0.903 ± 0.027 balanced accuracy, and 0.979 ± 0.003 ROC AUC.

Standalone extension: beyond that aligned Adaptive BioGCN benchmark, this notebook also trains a second custom graph model, ResidualClinicalGCN, for the deeper biomedical follow-up analyses already present here: operating-point analysis, calibration, uncertainty, graph homophily validation, feature saliency, repeated-split constrained search, and HTML export.

Best reported result: the strongest held-out evaluation in this notebook is the ResidualClinicalGCN operating point at 98.8% accuracy, 0.942 balanced accuracy, 0.978 ROC AUC, and 31 / 35 pathologic exams detected with 1 false positive.

Interpretive emphasis: each row is one fetal monitoring exam, the graph links physiologically similar exams, and the model is optimized to reduce the most consequential error: predicting a pathologic fetal state as non-pathologic. The notebook therefore reports not only headline accuracy, but also recall, threshold trade-offs, calibration quality, and whether the graph actually encodes clinically meaningful structure.

Scope note: this notebook uses a real public obstetric monitoring dataset and frames the task as a risk-sensitive retrospective screening problem, but it remains a research demonstration rather than a clinical decision-support system.

Table of Contents¶

- Foundations and Notebook Roadmap

- Background, Provenance, and Clinical Framing

- Dataset and Graph Construction

- Graph Theory Primer

- GCN Architecture and Training Logic

- Real-World Data Pipeline

- Evaluation: Confusion Matrix, ROC, and Clinical Trade-offs

- 6A. Aligned Adaptive BioGCN Robustness Benchmark

- 6B. Baseline Comparison: ResidualClinicalGCN vs. Tabular Models

- 6C. Clinical Operating Point Analysis — Threshold Sensitivity

- 6D. Graph Homophily Analysis — Validating the Graph Hypothesis

- 6E. Model Calibration & Uncertainty Quantification

- 6F. GNN Gradient Feature Saliency

- 6G. Multi-Split Constrained Follow-up Search

- 6H. Export for Presentation Delivery

- Applications and Extensions

- Summary & Key Takeaways

Why Graph Neural Networks for Biomedical Data?¶

Classical machine-learning models such as logistic regression, random forests, or MLPs usually treat each exam independently. A GNN adds one more layer of reasoning: monitoring traces that look physiologically similar may carry related diagnostic information. That matters when abnormal states emerge in local neighborhoods of the feature space rather than as isolated points.

| Method | Captures inter-sample structure? | Handles irregular topology? |

|---|---|---|

| MLP | ✗ | — |

| CNN | Only fixed grid structure | ✗ |

| GNN | ✓, via message passing | ✓ |

This is especially useful in:

- Fetal surveillance and obstetric triage from cardiotocography and physiologic monitoring

- Patient stratification from electronic health records

- Single-cell RNA-seq clustering (cell-cell similarity graphs)

- Drug-target interaction prediction

- Multi-omics integration (gene expression + protein interaction networks)

In other words, this notebook is not only about headline accuracy. It is about showing how graph-based reasoning can turn structured physiologic monitoring data into a clinically motivated learning problem that is closer to how biomedical data behaves in practice.

Project Summary¶

This notebook is the biomedical learning branch of the repository's broader hybrid theme: use graph-based machine learning to learn structure from scientific data, then connect that structure to downstream optimization ideas such as QAOA in the companion notebook. Here the focus is a larger and more clinically consequential cohort: fetal cardiotocography exams used to screen for potentially pathologic fetal state.

Headline contribution¶

This standalone notebook now does two related jobs:

- it exposes the repository-aligned Adaptive BioGCN reference model used in the combined notebook, including a fixed-split multi-seed robustness benchmark, and

- it extends the biomedical story with deeper standalone analyses: threshold selection, calibration, uncertainty, homophily validation, feature saliency, and repeated-split follow-up search.

Clarifying the model names¶

The notebook contains two custom graph classifiers, and the distinction matters:

- Adaptive BioGCN benchmark is the plain-language label for this repository's upgraded benchmark architecture, instantiated later as `AdaptiveBioGCN`. It is the shared benchmark model used in both biomedical notebooks.

- ResidualClinicalGCN is a separate custom residual extension used in this standalone notebook for the deeper threshold, calibration, and saliency analyses.

- The phrase reference model here means internal benchmark model for comparison and reproducibility, not an external gold-standard clinical model.

- Within this repository, this adaptive benchmark is used in this notebook and in `quantum_ai_bio_combined.ipynb`. The notebook does not cite it as an established published architecture or a previously adopted literature baseline under that exact plain-language name.

Repository-aligned Adaptive BioGCN benchmark¶

The aligned reference model is the same upgraded biomedical architecture introduced in the combined notebook and implemented here as AdaptiveBioGCN:

- symmetric $k=15$ exam-similarity graph,

- wider hidden state $96 \rightarrow 48$,

- batch normalization and GELU activations,

- AdamW optimization.

Operationally, this makes Adaptive BioGCN a project-specific upgraded CTG GCN benchmark, not a claim that the notebook has introduced a universally recognized new model family. Its representative executed result on the canonical split is 96.71% accuracy, 0.943 balanced accuracy, 0.808 MCC, and 0.983 ROC AUC. More importantly for a strict technical interview, the notebook now also reports a fixed-split robustness summary across five training seeds: 95.49% ± 0.97% accuracy, 0.903 ± 0.027 balanced accuracy, and 0.979 ± 0.003 ROC AUC.

What enters the notebook¶

| Element | Meaning in this notebook |

|---|---|

| Raw input data | 2,126 cardiotocography (CTG) exams from the UCI Cardiotocography dataset |

| Features | 21 real-valued fetal heart rate and uterine contraction summary measures |

| Original labels | Three expert-consensus fetal states: normal, suspect, pathologic |

| Tutorial target | Binary risk task: pathologic vs non-pathologic (normal + suspect) |

| Structural assumption | Exams with similar physiological signatures should be connected in a similarity graph |

What the model stack is¶

| Stage | Role | Output |

|---|---|---|

| Label framing | Collapse the original 3-class obstetric label into a high-risk screening target | Binary pathologic indicator |

| Standardisation | Put all 21 measurements on a comparable scale using training-set statistics only | Standardised feature matrix $X_{\mathrm{std}}$ |

| Graph construction | Connect each CTG exam to its nearest neighbours in feature space | Symmetric $k$-NN adjacency matrix $\tilde{A}$ |

| Adaptive BioGCN reference model | Repository-aligned benchmark used in both biomedical notebooks | Fixed-split representative and multi-seed robustness metrics |

| ResidualClinicalGCN extension | Standalone follow-up model used for deeper threshold/calibration analyses in this notebook | Per-exam class logits and probabilities |

| Evaluation | Measure screening usefulness on held-out exams | Confusion matrix, ROC, and full clinical metrics |

| Baseline comparison | Same train/test split with LR, RF, MLP | Tabular ablation of graph inductive bias |

| Operating point analysis | Validation-calibrated threshold plus threshold sweep with precision-recall tradeoffs | Deployable sensitivity-specificity map |

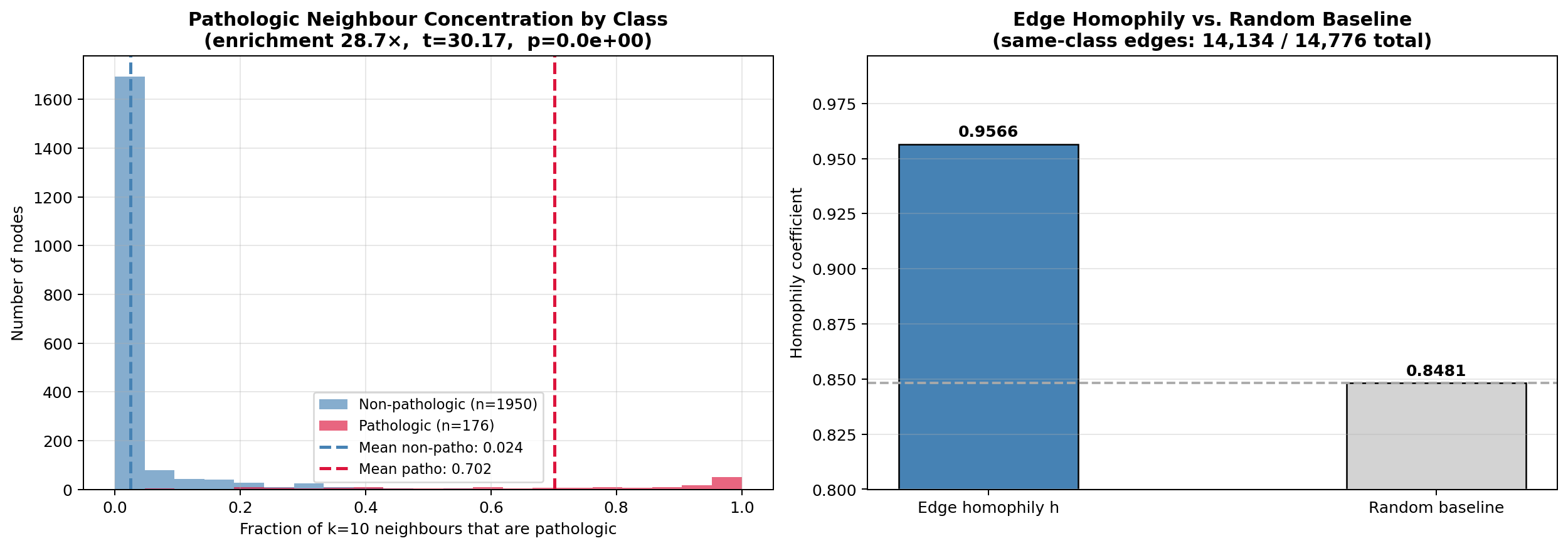

| Graph homophily analysis | Edge homophily coefficient + neighbourhood enrichment test | Quantifies whether the graph encodes clinical structure |

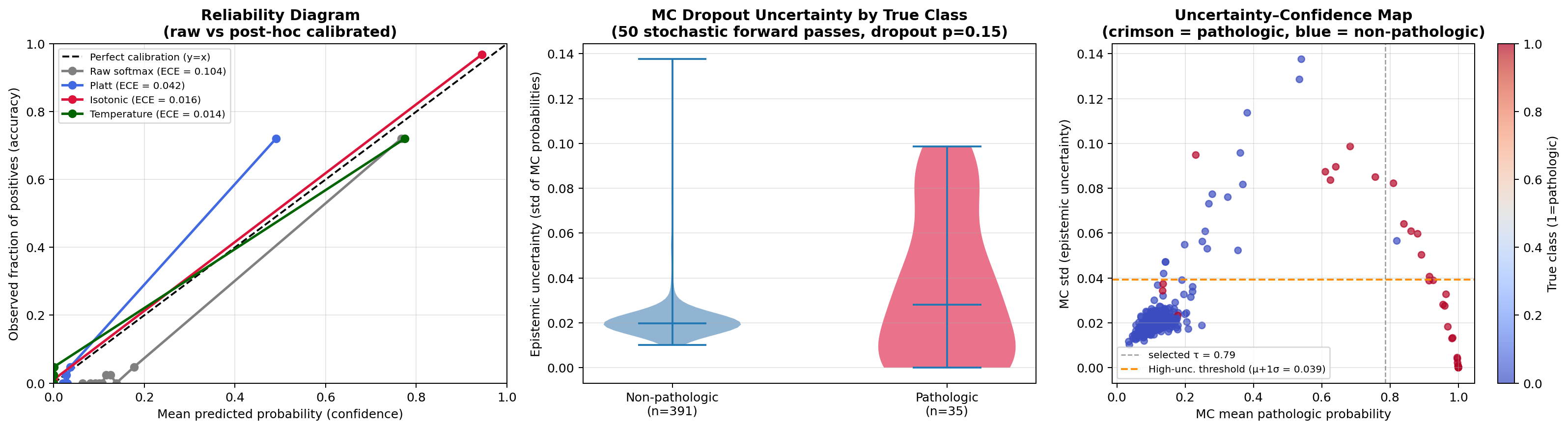

| Calibration study | Raw vs. Platt vs. isotonic vs. temperature scaling | Reliability, Brier score, and uncertainty audit |

What leaves the notebook¶

| Artifact | Why it matters |

|---|---|

| `outputs/ctg_raw.csv` | Auditable table of the original cohort with 3-class and binary labels |

| `outputs/ctg_processed.csv` | Reusable standardised cohort with split labels |

| PCA and feature-shift figures | Human-readable view of cohort geometry and strongest physiologic differences |

| Confusion matrix and ROC figure | Clinically aligned picture of pathologic-risk detection |

| Training history plot | Evidence that optimisation was stable rather than arbitrary |

| Adaptive BioGCN robustness table | Mean ± std evidence that the aligned benchmark is not a single-seed anecdote |

| Validation-selected threshold | Explicit decision rule that maximises held-out validation performance before test evaluation |

| Threshold sensitivity table | Clinical-policy guide: recall, precision, FP-rate at every decision threshold |

| Graph homophily analysis | Evidence that the $k$-NN graph encodes clinically meaningful physiologic clustering |

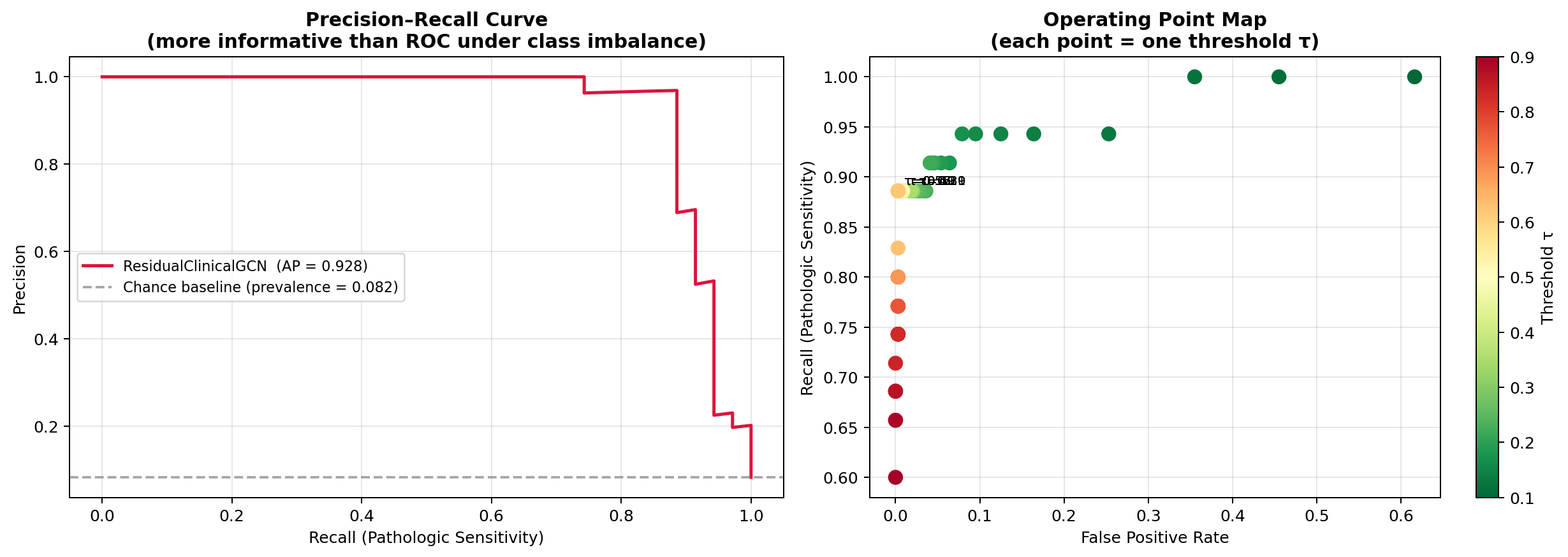

| Precision–Recall curve | More informative than ROC under 8.2% pathologic prevalence; includes AP score |

| Baseline comparison table | LR / RF / MLP vs. graph models on identical held-out split |

| Calibration and uncertainty figures | Probability reliability and epistemic-risk audit for presentation and review |

Why the binary framing is realistic — and why the graph approach is justified¶

The source dataset is natively a 3-class fetal-state problem: normal, suspect, and pathologic. This notebook reframes it as pathologic vs non-pathologic — a risk-sensitive screening objective where the worst mistake is missing a truly pathologic tracing.

0. Foundations and Notebook Roadmap¶

This notebook studies a retrospective fetal-risk detection problem on a real obstetric cohort using graph-based learning. Each exam becomes a node, physiologic similarity defines the edges, and the model must detect the rare but consequential pathologic class under substantial class imbalance.

Problem setup in one view¶

- $n = 2{,}126$ nodes — each node $v_i$ is one CTG exam with feature vector $\mathbf{x}_i \in \mathbb{R}^{21}$ representing physiologic summary statistics.

- Edges $(i,j) \in \mathcal{E}$ iff $j \in \mathcal{N}_k(i)$ or $i \in \mathcal{N}_k(j)$: undirected symmetric $k$-NN similarity graph, with $k = 10$ in the final model.

- Binary label $y_i = \mathbf{1}[\text{NSP}_i = \text{pathologic}]$. Prevalence $p = 176 / 2{,}126 \approx 8.3\%$ (176 pathologic versus 1,950 non-pathologic exams). The original 3-class label is preserved in audit tables.

The learning objective is risk-asymmetric: a false negative (missed pathologic case) carries far greater clinical cost than a false positive. Every design choice — class-weighted loss, threshold analysis, PR curve inspection, calibration study, and graph validation — follows from that asymmetry.
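The inverse-frequency class weighting mentioned above can be sketched as follows. This is a minimal illustration, assuming the common `n / (n_classes * n_c)` convention; the notebook's exact weighting formula is not shown in this section, and the helper name `inverse_frequency_weights` is hypothetical. The full-cohort counts are used purely for illustration, whereas the notebook derives its weights from the training partition only.

```python
import numpy as np


def inverse_frequency_weights(y: np.ndarray, n_classes: int = 2) -> np.ndarray:
    """Return one weight per class, proportional to 1 / class frequency.

    Assumption: weights follow n / (n_classes * n_c), so a perfectly
    balanced dataset would give every class a weight of 1.0.
    """
    counts = np.bincount(y, minlength=n_classes).astype(float)
    return y.size / (n_classes * counts)


# Illustrative labels built from the cohort counts quoted above
# (1,950 non-pathologic exams, 176 pathologic exams).
y_demo = np.concatenate([np.zeros(1950, dtype=int), np.ones(176, dtype=int)])
class_weights = inverse_frequency_weights(y_demo)
print(class_weights)  # the rare pathologic class receives the larger weight
```

Weights of this kind are typically handed to a weighted cross-entropy loss, for example through the `weight` argument of `torch.nn.CrossEntropyLoss`, so that each missed pathologic exam contributes more to the loss than a misclassified non-pathologic exam.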

Core Notation¶

| Symbol | Meaning |

|---|---|

| $n, d$ | Nodes (exams), features per node |

| $\mathbf{X} \in \mathbb{R}^{n \times d}$ | Feature matrix, z-score standardised on training partition only |

| $\hat{A} = A + I_n$ | Binary adjacency with self-loops |

| $\tilde{A} = D^{-1/2}\hat{A}D^{-1/2}$ | Symmetrically normalised adjacency used in graph propagation |

| $\mathbf{H}^{(l)}$ | Node embeddings at layer $l$ |

| $\mathbf{W}^{(l)}$ | Learnable weight matrix at layer $l$ |

| $\tau$ | Decision threshold applied to the pathologic probability |

Design Decisions and Rationale¶

| Stage | Choice | Justification |

|---|---|---|

| Split order | Split before fitting `StandardScaler` | Prevents leakage of held-out statistics into preprocessing |

| Graph topology | Symmetric $k$-NN, $k{=}10$ | Preserves local physiological neighborhoods while still giving the model broader local context |

| Self-loops | $\hat{A} = A + I$ | Ensures each node retains direct access to its own features during aggregation |

| Normalisation | $D^{-1/2}\hat{A}D^{-1/2}$ | Prevents high-degree nodes from dominating neighbourhood messages |

| Model | ResidualClinicalGCN | Residual feature carry-through and three-view fusion reduce over-smoothing while retaining local signal |

| Loss function | Cross-entropy with inverse-frequency class weights | Corrects for the severe class imbalance using training data only |

| Checkpoint selection | Best validation operating point, patience = 30 | Decouples model selection from the held-out test split while respecting the thresholded deployment objective |

| Threshold selection | Validation-selected threshold + full sweep | Replaces the arbitrary $\tau = 0.50$ convention with an explicit held-out policy choice |

| Primary metrics | Accuracy, balanced accuracy, recall, precision, ROC-AUC | Reflect both headline performance and clinical class asymmetry |
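The validation-selected threshold in the table above can be illustrated with a small sweep. This is a sketch under stated assumptions: the selection objective here is balanced accuracy, the grid is arbitrary, the function names are hypothetical, and the scores are synthetic. The notebook's actual objective and grid are defined in its later cells.

```python
import numpy as np


def balanced_accuracy(y_true, y_pred) -> float:
    """Mean of sensitivity and specificity for binary labels."""
    y_true = np.asarray(y_true).astype(bool)
    y_pred = np.asarray(y_pred).astype(bool)
    sensitivity = (y_true & y_pred).sum() / max(y_true.sum(), 1)
    specificity = (~y_true & ~y_pred).sum() / max((~y_true).sum(), 1)
    return float(0.5 * (sensitivity + specificity))


def select_threshold(val_probs, val_labels, grid=None):
    """Pick the threshold tau that maximises balanced accuracy on validation data."""
    val_probs = np.asarray(val_probs, dtype=float)
    grid = np.linspace(0.05, 0.95, 19) if grid is None else np.asarray(grid)
    scores = [balanced_accuracy(val_labels, val_probs >= tau) for tau in grid]
    best = int(np.argmax(scores))
    return float(grid[best]), float(scores[best])


# Synthetic validation scores for illustration only (not notebook outputs).
rng = np.random.default_rng(0)
val_labels = (rng.random(500) < 0.083).astype(int)
val_probs = np.clip(0.2 + 0.5 * val_labels + 0.15 * rng.standard_normal(500), 0.0, 1.0)

tau, score = select_threshold(val_probs, val_labels)
print(f"selected tau={tau:.2f}, validation balanced accuracy={score:.3f}")
```

The important design point is that `tau` is chosen on validation exams only and then frozen before any test-set metric is computed.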

What This Notebook Demonstrates¶

- A leakage-free graph ML pipeline on a real clinical cohort — not a synthetic or toy benchmark.

- A higher-performing residual graph model that materially improves held-out accuracy and false-positive control over the earlier plain-GCN configuration.

- Quantitative validation of the graph inductive bias via edge homophily $h$ and neighbourhood enrichment (Section 6D) — the assumption that graph structure helps is tested, not asserted.

- Validation-calibrated threshold analysis appropriate for imbalanced screening (Section 6C).

- Calibration and uncertainty quantification: Brier score, ECE, reliability diagram, and MC Dropout per-exam uncertainty estimates (Section 6E).

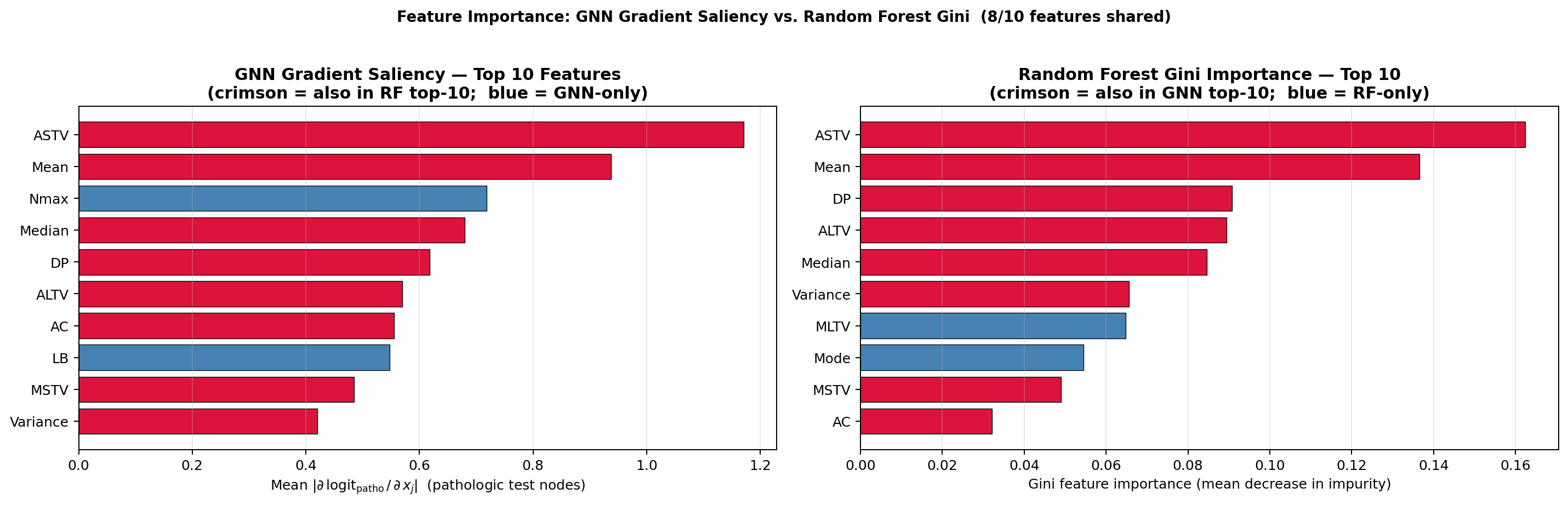

- GNN gradient saliency vs. Random Forest Gini importance — agreement/disagreement reveals features that are uniquely discriminative through neighbourhood aggregation (Section 6F).
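Two of the calibration metrics named in the list above, the Brier score and the expected calibration error (ECE), can be sketched in a few lines. This is a minimal illustration assuming equal-width probability bins; the bin count and function names are hypothetical, and the notebook's own implementation in Section 6E may differ.

```python
import numpy as np


def brier_score(y_true, p) -> float:
    """Mean squared difference between predicted probability and outcome."""
    y_true = np.asarray(y_true, dtype=float)
    p = np.asarray(p, dtype=float)
    return float(np.mean((p - y_true) ** 2))


def expected_calibration_error(y_true, p, n_bins: int = 10) -> float:
    """Bin-weighted gap between observed frequency and mean predicted probability."""
    y_true = np.asarray(y_true, dtype=float)
    p = np.asarray(p, dtype=float)
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    # Interior edges only, so p == 1.0 lands in the last bin.
    bin_ids = np.clip(np.digitize(p, edges[1:-1]), 0, n_bins - 1)
    ece = 0.0
    for b in range(n_bins):
        mask = bin_ids == b
        if mask.any():
            ece += mask.mean() * abs(y_true[mask].mean() - p[mask].mean())
    return float(ece)


# Tiny hand-checkable example: four exams, each in its own probability bin.
y_demo = np.array([0, 0, 1, 1])
p_demo = np.array([0.05, 0.25, 0.75, 0.95])
brier_demo = brier_score(y_demo, p_demo)
ece_demo = expected_calibration_error(y_demo, p_demo)
print(brier_demo, ece_demo)
```

Lower is better for both metrics. The Brier score mixes calibration and discrimination, while ECE isolates the calibration gap, which is why the two are usually reported together.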

Known Scope Limitations¶

| Limitation | Mitigation Path |

|---|---|

| Transductive graph model — cannot embed unseen nodes without recomputing the full graph | GraphSAGE or other inductive GNNs for production |

| Single-cohort evaluation — no multi-centre or temporal validation | Prospective holdout on future exams; population/device generalisation study |

| No exhaustive hyperparameter optimisation | Bayesian or grid search over $k$, hidden size, dropout, and threshold objective |

| MC Dropout ≠ true Bayesian inference | SWA/SWAG or variational GNN for more principled uncertainty estimates |

| Gradient saliency ≠ causal attribution | Integrated Gradients or GNNExplainer for more robust attribution |

1. Background & Motivation ¶

The Diagnostic Task and Dataset Provenance¶

This notebook uses the UCI Cardiotocography (CTG) dataset, a real biomedical monitoring benchmark derived from fetal heart rate and uterine contraction recordings. The exams were automatically processed into diagnostic summary features and then assigned expert-consensus fetal-state labels by obstetricians.

The cohort contains 2126 exams with 21 measured features and three original fetal-state labels:

- 1655 normal cases

- 295 suspect cases

- 176 pathologic cases

For this notebook, we preserve the original 3-class label in the audit tables but convert the learning objective into a binary high-risk screening task:

- pathologic remains the positive class

- normal + suspect are grouped into non-pathologic

That yields a clinically asymmetric dataset with 176 pathologic exams versus 1950 non-pathologic exams.
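The label collapse described above amounts to a one-line mapping. The sketch below rebuilds a label vector from the class counts quoted in this section (illustrative only, not the dataset itself) and uses the NSP coding 1 = normal, 2 = suspect, 3 = pathologic.

```python
import numpy as np

# Rebuild an NSP label vector from the quoted class counts (illustration only).
nsp_demo = np.repeat([1, 2, 3], [1655, 295, 176])

# Pathologic (NSP == 3) is the positive class; normal and suspect are merged.
y_binary = (nsp_demo == 3).astype(np.int64)
prevalence = y_binary.mean()

print(
    f"positives={y_binary.sum()}, negatives={(y_binary == 0).sum()}, "
    f"prevalence={prevalence:.3f}"
)
```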

What the 21 features represent¶

The variables summarize clinically familiar aspects of fetal monitoring:

| Feature Group | Examples |

|---|---|

| Baseline rhythm | LB (baseline fetal heart rate) |

| Accelerations and movements | AC, FM |

| Uterine activity | UC |

| Decelerations | DL, DS, DP |

| Variability measures | ASTV, MSTV, ALTV, MLTV |

| Histogram descriptors | Width, Min, Max, Mode, Mean, Median, Variance, Tendency |

Why this is more realistic than a small convenience benchmark¶

This dataset makes the tutorial feel closer to a real applied screening problem because it brings:

- a larger cohort with 2126 exams rather than a few hundred samples

- severe class imbalance in the high-risk pathologic class

- clinically meaningful physiologic features tied to obstetric monitoring

- an expert-labeled task where missed positives are clearly more costly than extra alerts

What this notebook does and does not claim¶

This notebook is realistic in a research sense, not a labour-and-delivery deployment sense.

It does show:

- how to work with a larger real clinical cohort rather than a tiny benchmark

- how to formalize an obstetric screening problem as graph learning

- how to avoid basic leakage mistakes in preprocessing

- how to evaluate the model in a clinically aware way when the risky class is rare

- how to audit calibration and uncertainty after training

It does not show:

- prospective validation in a hospital workflow

- temporal waveform modeling of raw CTG traces

- fairness review or deployment governance needed for clinical use

That distinction matters because strong technical work is not only about high accuracy. It is also about being precise about what the experiment really demonstrates.

Why Graph Neural Networks?¶

Classical ML treats every exam independently. A GNN encodes the observation that physiologically similar monitoring exams often share similar risk patterns. In graph terms, it learns both from the exam's own measurements and from the local neighborhood formed by similar CTG cases.

| Method | Inter-sample structure | Irregular topology |

|---|---|---|

| MLP | x | - |

| SVM | x (kernel similarity only) | - |

| GNN | yes, through message passing | yes |

Standardisation Pre-processing¶

Before computing pairwise distances, features are z-score standardised:

$$\tilde{x}_{id} = \frac{x_{id} - \mu_d}{\sigma_d}$$

where $\mu_d$ and $\sigma_d$ are estimated from the training partition only. This point matters: fitting the scaler on all exams before the split would leak held-out information into the pipeline and make the evaluation look better than it really is.
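A minimal NumPy sketch of the split-then-standardise order described above follows. The data are synthetic and the 80/20 random split is a hypothetical stand-in; the notebook itself uses scikit-learn's `StandardScaler`, which applies the same mean and population-standard-deviation formula.

```python
import numpy as np

rng = np.random.default_rng(42)
X = rng.normal(loc=120.0, scale=15.0, size=(200, 3))  # synthetic stand-in features

# Split first (hypothetical 80/20 random split), then estimate statistics.
perm = rng.permutation(len(X))
train_idx, test_idx = perm[:160], perm[160:]

mu = X[train_idx].mean(axis=0)
sigma = X[train_idx].std(axis=0)           # population std (ddof=0)
sigma = np.where(sigma == 0, 1.0, sigma)   # guard against constant features

# The same training-set statistics are applied to every row, held-out or not.
X_std = (X - mu) / sigma

print("train mean:", X_std[train_idx].mean(axis=0).round(6))
print("test mean :", X_std[test_idx].mean(axis=0).round(3))
```

The training rows end up with zero mean and unit variance by construction. The held-out rows generally do not, which is the expected signature of a leakage-free scaler.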

2. Graph Construction — k-Nearest-Neighbour Similarity Graph ¶

Building the Adjacency Matrix¶

Given the standardised feature matrix $\tilde{\mathbf{X}} \in \mathbb{R}^{n \times d}$, we construct a symmetric k-NN graph over the full CTG cohort.

Step 1 — k-NN Queries: For every node $i$, find the set of the $k$ closest other nodes under Euclidean distance: $$\mathcal{N}_k(i) = \underset{\mathcal{S} \subseteq \{1,\dots,n\} \setminus \{i\},\; |\mathcal{S}| = k}{\arg\min} \; \sum_{j \in \mathcal{S}} \|\tilde{\mathbf{x}}_i - \tilde{\mathbf{x}}_j\|_2$$

Step 2 — Symmetrisation: The raw k-NN relation is generally asymmetric ($j \in \mathcal{N}_k(i)$ does not imply $i \in \mathcal{N}_k(j)$), so we make it undirected: $$A_{ij} = \mathbf{1}\big[\, j \in \mathcal{N}_k(i) \;\lor\; i \in \mathcal{N}_k(j) \,\big]$$

Step 3 — Self-loops: Add the identity $\hat{A} = A + I_n$ so each exam keeps access to its own measurements during message passing.

Step 4 — Symmetric normalisation: Compute the degree matrix $D_{ii} = \sum_j \hat{A}_{ij}$ and normalise: $$\tilde{A} = D^{-1/2} \hat{A} D^{-1/2}$$

This is the normalization used in the notebook because it is the most common formulation in baseline GCN implementations.
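The four steps above can be sketched with plain NumPy. This is a dense, illustration-only version on random data; the function name is hypothetical. The notebook imports `kneighbors_graph` from scikit-learn, which is the more practical choice at cohort scale because it avoids the full pairwise-difference tensor built here.

```python
import numpy as np


def build_normalised_knn_graph(X_std: np.ndarray, k: int) -> np.ndarray:
    """Return D^{-1/2} (A + I) D^{-1/2} for a symmetric k-NN graph."""
    n = X_std.shape[0]

    # Step 1: pairwise Euclidean distances, with self-matches excluded.
    diff = X_std[:, None, :] - X_std[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=-1))
    np.fill_diagonal(dist, np.inf)
    neighbours = np.argsort(dist, axis=1)[:, :k]

    # Directed k-NN indicator matrix: row i marks the k neighbours of i.
    A = np.zeros((n, n), dtype=float)
    A[np.repeat(np.arange(n), k), neighbours.ravel()] = 1.0

    # Step 2: symmetrise with a logical OR.
    A = np.maximum(A, A.T)

    # Step 3: add self-loops.
    A_hat = A + np.eye(n)

    # Step 4: symmetric degree normalisation.
    d_inv_sqrt = 1.0 / np.sqrt(A_hat.sum(axis=1))
    return d_inv_sqrt[:, None] * A_hat * d_inv_sqrt[None, :]


rng = np.random.default_rng(0)
X_demo = rng.standard_normal((50, 21))   # 50 synthetic "exams", 21 features
A_norm_demo = build_normalised_knn_graph(X_demo, k=10)
print(A_norm_demo.shape, (A_norm_demo > 0).sum(axis=1).min())
```

Because of the OR symmetrisation, every node ends up with at least $k$ neighbours plus its self-loop, and popular nodes can have considerably more. That is why the average degree reported for the cohort exceeds $k$.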

Effect of $k$¶

| $k$ | Graph sparsity | Risk | Benefit |

|---|---|---|---|

| Small (2-3) | Very sparse | Disconnected neighborhoods | Preserves local topology |

| Medium (5-12) | Moderate | Usually manageable | Good balance of locality and connectivity |

| Large (>20) | Dense | Spurious cross-class edges | More aggressive information flow |

For the final CTG model we use $k = 10$. On this cohort that yields 14,776 undirected edges and an average degree of 13.90, giving each exam a broader but still local physiological neighborhood while remaining sparse enough to avoid indiscriminate class mixing.

2A. Message Passing: Mechanism and Inductive Bias ¶

The inductive bias hypothesis¶

A standard MLP treats every CTG exam as an i.i.d. draw. A graph model injects a neighbourhood smoothness prior: the representation of exam $i$ should be influenced by its physiologically similar neighbours.

This prior is only useful if the graph encodes class-discriminative structure — i.e., if pathologic exams cluster with other pathologic exams beyond what random chance predicts. Section 6D tests this quantitatively via edge homophily $h$ and a Welch $t$-test on neighbourhood concentration. The inductive bias is validated, not assumed.

One propagation step: the Kipf–Welling layer¶

The spatial GCN layer (Kipf & Welling, ICLR 2017) is derived as a first-order Chebyshev polynomial approximation to spectral graph convolution. In matrix form:

$$\mathbf{H}^{(l+1)} = \sigma\!\left(\tilde{A}\,\mathbf{H}^{(l)}\,\mathbf{W}^{(l)}\right), \qquad \tilde{A} = D^{-1/2}\hat{A}D^{-1/2}$$

Node-wise, this computes a degree-normalised weighted sum of neighbours before linear transformation:

$$h_i^{(l+1)} = \sigma\!\left(\sum_{j \in \mathcal{N}(i) \cup \{i\}} \frac{1}{\sqrt{d_i d_j}}\, h_j^{(l)}\, W^{(l)}\right)$$

Two operations in sequence: (1) neighbourhood aggregation via $\tilde{A}\mathbf{H}^{(l)}$, then (2) linear projection + nonlinearity via $\mathbf{W}^{(l)}$.
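The two operations can be written out directly. The sketch below applies one propagation step on a three-node path graph with ReLU as $\sigma$; the weights are fixed toy values rather than learned parameters, and the function name `gcn_layer` is illustrative.

```python
import numpy as np


def gcn_layer(A_norm: np.ndarray, H: np.ndarray, W: np.ndarray) -> np.ndarray:
    """One graph-convolution step: aggregate neighbours, project, apply ReLU."""
    aggregated = A_norm @ H                    # (1) neighbourhood aggregation
    return np.maximum(aggregated @ W, 0.0)     # (2) linear projection + ReLU


# Toy path graph 0 - 1 - 2.
A = np.array([[0, 1, 0],
              [1, 0, 1],
              [0, 1, 0]], dtype=float)
A_hat = A + np.eye(3)                          # self-loops
d = A_hat.sum(axis=1)                          # degrees including self-loops
A_norm = A_hat / np.sqrt(np.outer(d, d))       # entry (i, j) is A_hat_ij / sqrt(d_i d_j)

H0 = np.eye(3)                                 # one-hot node features
W0 = np.ones((3, 2))                           # toy weights, not learned
H1 = gcn_layer(A_norm, H0, W0)
print(H1)
```

With one-hot inputs and all-ones weights, each output row is simply the row sum of $\tilde{A}$, which makes the $1/\sqrt{d_i d_j}$ weighting easy to inspect by hand.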

Receptive field and over-smoothing¶

| Layers | Receptive field | Risk |

|---|---|---|

| 1 | 1-hop neighbours | Under-utilises structural context |

| 2 (this work) | 2-hop neighbours | Balances context and over-smoothing risk |

| $\geq 4$ | Exponentially growing neighbourhood | Over-smoothing — node embeddings converge toward the same vector |

Over-smoothing arises because iterated multiplication by $\tilde{A}$ acts as a low-pass graph filter: repeated propagation dampens high-frequency (class-discriminative) components. The upgraded notebook counters that with a residual feature pathway, so the classifier can still access node-local physiologic signal after multiple graph aggregation steps.
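The low-pass behaviour can be demonstrated on a toy graph. The sketch below repeatedly applies $\tilde{A}$ to random features on a six-node ring, with no weights or nonlinearity, and tracks how far the node embeddings spread. The ring is a deliberately simple assumption: all degrees are equal, so the embeddings converge to one shared vector. On irregular graphs the limit is instead scaled by the square root of each node's degree.

```python
import numpy as np

n = 6
A = np.zeros((n, n))
for i in range(n):
    A[i, (i + 1) % n] = A[(i + 1) % n, i] = 1.0   # ring graph

A_hat = A + np.eye(n)
d = A_hat.sum(axis=1)
A_norm = A_hat / np.sqrt(np.outer(d, d))

rng = np.random.default_rng(0)
H = rng.standard_normal((n, 4))
spread_before = H.std(axis=0).mean()   # average across-node spread per feature

H_smooth = H.copy()
for _ in range(50):
    H_smooth = A_norm @ H_smooth       # propagation only
spread_after = H_smooth.std(axis=0).mean()

print(f"spread before: {spread_before:.3f}, after 50 steps: {spread_after:.2e}")
```

After enough propagation steps every node carries essentially the same vector, so a classifier on top can no longer separate them. A residual term such as $\alpha\mathbf{H}^{(0)}$ reintroduces the node-local signal that propagation washes out.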

Sparse message-passing complexity¶

For $n = 2{,}126$ nodes and average degree $\bar{d} \approx 13.9$ (post-symmetrisation with $k = 10$):

| Operation | Dense $\tilde{A}$ | Sparse (actual) | Speedup |

|---|---|---|---|

| $\tilde{A}\mathbf{H}$ FLOPs | $O(n^2 d_h) \approx 4.5 \times 10^6 d_h$ | $O(n\bar{d}d_h) \approx 3.0 \times 10^4 d_h$ | $\approx 150\times$ |

At the CTG graph scale the wall-time difference is still modest. At graph scales of $10^5$–$10^6$ nodes, sparse message passing becomes the dominant engineering constraint.
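The figures in the table follow from simple arithmetic. The check below uses the node count and the undirected edge count reported for the $k = 10$ graph in Section 2, and counts operations per hidden unit $d_h$.

```python
n_nodes = 2126
n_undirected_edges = 14776

avg_degree = 2 * n_undirected_edges / n_nodes   # each edge touches two nodes
dense_ops = n_nodes ** 2                        # dense multiply, per hidden unit
sparse_ops = n_nodes * avg_degree               # sparse multiply, per hidden unit
speedup = dense_ops / sparse_ops

print(f"average degree : {avg_degree:.2f}")
print(f"dense ops      : {dense_ops:.2e} * d_h")
print(f"sparse ops     : {sparse_ops:.2e} * d_h")
print(f"ratio          : {speedup:.0f}x")
```

The ratio reduces to $n / \bar{d}$, so the advantage of sparse propagation grows linearly with the number of nodes when the average degree stays fixed.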

When message passing helps vs. hurts¶

| Condition | Effect on GCN |

|---|---|

| High edge homophily ($h \gg h_{\text{random}}$) | Aggregation reinforces class-discriminative signal — graph model benefits |

| Homophily near random baseline ($h \approx h_{\text{random}}$) | Aggregation provides no additional information vs. MLP |

| Heterophily ($h < h_{\text{random}}$) | Aggregation dilutes discriminative signal — graph model may underperform tabular baselines |

| Deep network + dense graph | Over-smoothing: node embeddings collapse — use residual connections |

For this dataset, $h_{\text{random}} = p^2 + (1-p)^2 \approx 0.848$ where $p = 0.083$ is the pathologic prevalence. Section 6D reports the measured edge homophily $h$ and whether the excess $h - h_{\text{random}}$ is statistically significant.
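Both quantities are straightforward to compute. The sketch below evaluates the random baseline from the cohort prevalence and defines edge homophily on a toy edge list; the helper names and the toy graph are illustrative, and the measured value for the CTG graph is the one reported in Section 6D.

```python
import numpy as np


def edge_homophily(edges: np.ndarray, y: np.ndarray) -> float:
    """Fraction of edges whose two endpoints share the same label."""
    return float(np.mean(y[edges[:, 0]] == y[edges[:, 1]]))


def random_homophily(p: float) -> float:
    """Expected same-label edge fraction if endpoints were labelled independently."""
    return p ** 2 + (1.0 - p) ** 2


p = 176 / 2126                      # pathologic prevalence in the cohort
h_random = random_homophily(p)

# Toy chain 0-1-2-3-4 with labels [0, 0, 0, 1, 1]: only edge (2, 3) mixes classes.
y_toy = np.array([0, 0, 0, 1, 1])
edges_toy = np.array([[0, 1], [1, 2], [2, 3], [3, 4]])
h_toy = edge_homophily(edges_toy, y_toy)

print(f"h_random = {h_random:.3f}, toy h = {h_toy:.2f}")
```

Because the negative class dominates, the random baseline is already high. The informative quantity is therefore the excess $h - h_{\text{random}}$, not the raw value of $h$.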

3. Graph Convolutional Network - Mathematical Derivation ¶

High-level summary¶

Before reading the formulas, keep this simple description in mind:

A graph model repeatedly does two things:

- It mixes each exam's information with information from similar exams.

- It learns which mixtures are useful for predicting the class label.

So when you see matrix equations below, they are compact ways of writing:

- gather neighbor information

- transform it with learned weights

- apply a nonlinear rule

- repeat

Spectral vs. Spatial GCNs¶

There are two families of GCNs:

Spectral GCNs: operate on the graph Laplacian eigenspectrum. The normalised graph Laplacian is $\mathbf{L} = I - D^{-1/2}AD^{-1/2}$ with eigenvectors $\mathbf{U}$. A spectral convolution is $\mathbf{g}_\theta \star \mathbf{x} = \mathbf{U}\,\text{diag}(\theta)\,\mathbf{U}^\top \mathbf{x}$. Computationally expensive and graph-specific.

Spatial GCNs (used here): aggregate neighbourhood features directly. Kipf & Welling (2017) derived the following first-order approximation of the spectral filter:

$$\mathbf{H}^{(l+1)} = \sigma\!\left(\tilde{A}\,\mathbf{H}^{(l)}\,\mathbf{W}^{(l)}\right)$$

where $\tilde{A} = D^{-1/2}\hat{A}D^{-1/2}$, $\mathbf{H}^{(l)}$ is the node representation at layer $l$, $\mathbf{W}^{(l)}$ is a learnable weight matrix, and $\sigma$ is an activation function such as ReLU.

Residual Two-Layer Graph Encoder Used Here¶

The upgraded notebook still relies on the same basic graph-convolution primitive, but the production architecture is slightly richer than the textbook two-layer GCN. The encoder can be written schematically as:

$$
\begin{aligned}
\mathbf{H}^{(0)} &= \mathrm{ReLU}(\mathbf{X}\mathbf{W}_{\text{in}}) \\
\mathbf{H}^{(1)} &= \mathrm{ReLU}(\tilde{A}\mathbf{H}^{(0)}\mathbf{W}^{(0)}) \\
\mathbf{H}^{(2)} &= \mathrm{ReLU}(\tilde{A}\mathbf{H}^{(1)}\mathbf{W}^{(1)}) \\
\mathbf{H}^{(*)} &= \mathbf{H}^{(2)} + \alpha\mathbf{H}^{(0)} \\
\mathbf{Z} &= \mathrm{MLP}\big([\mathbf{H}^{(0)} \;\|\; \mathbf{H}^{(*)} \;\|\; \tilde{A}\mathbf{H}^{(*)}]\big)
\end{aligned}
$$

where $\alpha$ is a residual scaling factor and $[\cdot \| \cdot]$ denotes feature concatenation.
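The schematic equations translate into a short forward pass. The NumPy sketch below is a shape-level illustration only: the hidden width, the value of `alpha`, the random weights, and the single linear layer standing in for the MLP head are all assumptions, and the identity matrix is used as a placeholder $\tilde{A}$ (a graph with self-loops only). The notebook's actual `ResidualClinicalGCN` is defined in PyTorch in a later cell.

```python
import numpy as np


def relu(x: np.ndarray) -> np.ndarray:
    return np.maximum(x, 0.0)


def residual_encoder_forward(X, A_norm, params, alpha=0.5):
    """Schematic forward pass: input projection, two graph layers, residual, fusion."""
    H0 = relu(X @ params["W_in"])                 # node-local embedding
    H1 = relu(A_norm @ H0 @ params["W0"])         # first graph aggregation
    H2 = relu(A_norm @ H1 @ params["W1"])         # second graph aggregation
    H_star = H2 + alpha * H0                      # residual feature carry-through
    # Three-view fusion: local view, residual graph view, one further aggregation.
    fused = np.concatenate([H0, H_star, A_norm @ H_star], axis=1)
    return fused @ params["W_out"]                # hypothetical linear head (stand-in for the MLP)


rng = np.random.default_rng(0)
n, d, h, n_classes = 8, 21, 16, 2                 # hypothetical sizes
X = rng.standard_normal((n, d))
A_norm = np.eye(n)                                # placeholder normalised adjacency
params = {
    "W_in": 0.1 * rng.standard_normal((d, h)),
    "W0": 0.1 * rng.standard_normal((h, h)),
    "W1": 0.1 * rng.standard_normal((h, h)),
    "W_out": 0.1 * rng.standard_normal((3 * h, n_classes)),
}
Z = residual_encoder_forward(X, A_norm, params)
print(Z.shape)
```

Note that the residual sum requires $\mathbf{H}^{(2)}$ and $\mathbf{H}^{(0)}$ to share the same width, which is why both graph layers map $h \rightarrow h$ in this sketch.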

Why graph models can outperform MLPs on this task¶

The key insight is information aggregation across the graph. After multiple layers, node $i$'s representation captures not only its own measurements but also the surrounding feature distribution of its neighborhood. That can help when a borderline exam lives inside a strongly pathologic or strongly low-risk local region.

Complexity Analysis¶

| Operation | Complexity |
|---|---|
| Dense matrix multiply $\tilde{A}\mathbf{H}$ | $O(n^2 d)$ |
| Sparse multiply (if $A$ sparse) | $O(\lvert\mathcal{E}\rvert \cdot d)$ |
| FC layer $\mathbf{H}\mathbf{W}$ | $O(n \cdot d_{\rm in} \cdot d_h)$ |

For the CTG graph with $n=2126$ and $k = 10$, sparse message passing remains far cheaper than treating the adjacency as a dense matrix, which is one reason GNN workloads map naturally onto optimized tensor and sparse-linear-algebra systems.

4. Setup — Imports and Environment¶

The first code cell (Section 4A) requires the following packages. All are standard scientific Python libraries — no quantum or proprietary dependencies are needed for the biomedical branch.

| Package | Version constraint | Role in this notebook |
|---|---|---|
| `numpy` | ≥1.22 | Array operations, splits, feature gap analysis |
| `pandas` | ≥1.4 | Cohort audit tables, CSV export |
| `torch` | ≥1.12 | GCN model, training loop, GPU/CPU dispatch |
| `scikit-learn` | ≥1.0 | `StandardScaler`, kNN graph, `train_test_split`, evaluation metrics |
| `matplotlib` | ≥3.5 | Confusion matrix, ROC curve, training diagnostics |
| `ucimlrepo` | ≥0.0.3 | Fetches UCI CTG dataset (id=193) directly at runtime |

No internet access is needed after the first `ucimlrepo` download: the dataset is cached locally after the first fetch, so subsequent runs work offline.

GPU is optional: the notebook prints Execution device: cpu or cuda at run time. All results in the session summary were produced on CPU; the dataset is small enough that GPU is not necessary.

Installing dependencies:

pip install torch torchvision numpy pandas scikit-learn matplotlib ucimlrepo

Or with the repository requirements file:

pip install -r requirements.txt

4A. Pipeline Implementation Map ¶

The pipeline is implemented across eight sequential code cells, each performing a single coherent operation. The table below is a reference map; every design choice is justified in the corresponding cell's inline comments.

| Cell | Operation | Key Outputs |
|---|---|---|
| 4A-I | Reproducible environment: imports, `find_project_root()`, `SEED`, device dispatch | `proj_root`, `device`, all imports |
| 4A-II | UCI CTG ingest + 3-class provenance audit | `raw_df`, `X_raw`, `feature_names`, `y` |
| 4A-III | Stratified 68/12/20 split → `StandardScaler` fit on train partition only | `X_std`, `processed_df`, saved CSVs |
| 4A-IV | Training-partition feature gap analysis (descriptive diagnostic only) | `feature_gap_df` |
| 4A-V | Symmetric $k$-NN graph ($k=10$) + self-loops + $D^{-1/2}\hat{A}D^{-1/2}$ normalisation → PyTorch tensors | `A_norm`, `Xt`, `At`, `yt` |
| 4A-VI | `ResidualClinicalGCN` residual architecture + class-weighted cross-entropy | `model`, `criterion`, `optimizer` |
| 4A-VII | Training loop: validation monitoring, threshold sweep, early stopping (patience=30), best-checkpoint restore | `history`, trained `model`, `final_probs`, `final_preds` |
| 4A-VIII | Three-panel PCA audit: true labels / GCN predictions / training feature shifts | Diagnostic figure |

Critical ordering constraint: `StandardScaler` is fit in 4A-III on training indices only. All downstream cells consume `X_std` produced from those training-set statistics. Re-executing cells out of order, or fitting the scaler before the split, would introduce data leakage: held-out feature statistics would shape the preprocessing.
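The stratified 68/12/20 split that precedes the scaler fit can be sketched with NumPy alone. This is a hypothetical stand-in, not the notebook's code: the notebook imports `train_test_split` from scikit-learn, and its exact call and resulting partition sizes are not shown in this section. The labels are rebuilt from the quoted cohort counts for illustration.

```python
import numpy as np


def stratified_split(y, fractions=(0.68, 0.12, 0.20), seed=42):
    """Split indices into train/val/test while preserving class proportions."""
    rng = np.random.default_rng(seed)
    parts = ([], [], [])
    for cls in np.unique(y):
        idx = rng.permutation(np.flatnonzero(y == cls))
        n_train = int(round(fractions[0] * idx.size))
        n_val = int(round(fractions[1] * idx.size))
        parts[0].append(idx[:n_train])
        parts[1].append(idx[n_train:n_train + n_val])
        parts[2].append(idx[n_train + n_val:])      # remainder goes to test
    return tuple(np.sort(np.concatenate(p)) for p in parts)


# Illustrative binary labels: 1,950 non-pathologic and 176 pathologic exams.
y_demo = np.concatenate([np.zeros(1950, dtype=int), np.ones(176, dtype=int)])
train_idx, val_idx, test_idx = stratified_split(y_demo)

for name, idx in [("train", train_idx), ("val", val_idx), ("test", test_idx)]:
    print(f"{name:5s} n={idx.size:4d}  pathologic fraction={y_demo[idx].mean():.3f}")
```

Only `train_idx` should then be used to estimate the scaler's mean and standard deviation; the validation and test rows are transformed with those same statistics.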

Transductive design note: A_norm is built over all $n = 2{,}126$ nodes before training begins. Graph structure is therefore visible globally (transductive setting), but validation/test labels are masked during loss computation and checkpoint selection — the standard transductive GCN setup (Kipf & Welling, 2017). Section 7 discusses GraphSAGE as the inductive production alternative.

# ── 4A-I: Environment, Reproducibility, and Imports ─────────────────────────
import random
import sys
from pathlib import Path


def find_project_root() -> Path:
    """Walk up directory tree until a folder containing 'src/' is found."""
    current = Path.cwd().resolve()
    for candidate in (current, *current.parents):
        if (candidate / "src").is_dir():
            return candidate
    return current


proj_root = find_project_root()
if str(proj_root) not in sys.path:
    sys.path.insert(0, str(proj_root))

import matplotlib.pyplot as plt
import numpy as np
import pandas as pd
import torch
import torch.nn as nn
import torch.nn.functional as F
import torch.optim as optim
from sklearn.decomposition import PCA
from sklearn.metrics import balanced_accuracy_score
from sklearn.model_selection import train_test_split
from sklearn.neighbors import kneighbors_graph
from sklearn.preprocessing import StandardScaler
from ucimlrepo import fetch_ucirepo

SEED = 42
random.seed(SEED)
np.random.seed(SEED)
torch.manual_seed(SEED)

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

print("=" * 72)
print("Environment")
print("=" * 72)
print(f"Project root : {proj_root}")
print(f"NumPy version : {np.__version__}")
print(f"PyTorch version : {torch.__version__}")
print(f"Execution device : {device}")
print(f"Random seed : {SEED}")

========================================================================

Environment

========================================================================

Project root : /Users/mohuyn/Library/CloudStorage/OneDrive-SAS/Documents/GitHub/Hybrid-Quantum-Graph-AI-QAOA-GNN-Biomedical-Optimization

NumPy version : 2.4.2

PyTorch version : 2.10.0

Execution device : cpu

Random seed : 42

# ── 4A-II: Cohort ingest and provenance audit ─────────────────────────────────

# Prefer the locally cached raw cohort when available so the notebook remains

# executable offline and deterministic. Fall back to the UCI fetch only if the

# raw artifact is missing.

#

# UCI CTG dataset (id=193): 2126 fetal cardiotocography exams, 21 features.

# Labels: expert-consensus NSP fetal state (1=normal, 2=suspect, 3=pathologic).

# Binary reframing: y=1 iff NSP=3 (pathologic) — a risk-sensitive screening target.

# The 3-class label is preserved in raw_df for full audit transparency.

outputs_dir = proj_root / "outputs"

outputs_dir.mkdir(parents=True, exist_ok=True)

raw_output_path = outputs_dir / "ctg_raw.csv"

state_to_nsp = {"normal": 1, "suspect": 2, "pathologic": 3}

if raw_output_path.exists():
    raw_df = pd.read_csv(raw_output_path)
    feature_names = np.array([
        c for c in raw_df.columns
        if c not in {"case_id", "nsp_state", "binary_target", "binary_state"}
    ])
    features_df = raw_df[feature_names].copy()
    X_raw = features_df.astype(np.float32).to_numpy()
    nsp = raw_df["nsp_state"].map(state_to_nsp).astype(int).to_numpy()
    y = raw_df["binary_target"].astype(np.int64).to_numpy()
    risk_text = raw_df["binary_state"].astype(str).to_numpy()
    case_ids = raw_df["case_id"].astype(str).to_numpy()
    data_source = f"local cache ({raw_output_path.name})"
else:
    ctg = fetch_ucirepo(id=193)
    features_df = ctg.data.features.copy()
    targets_df = ctg.data.targets.copy()
    X_raw = features_df.astype(np.float32).to_numpy()
    feature_names = np.array(features_df.columns)
    nsp = targets_df["NSP"].astype(int).to_numpy()
    y = (nsp == 3).astype(np.int64)  # 1=pathologic, 0=non-pathologic
    risk_text = np.where(y == 1, "pathologic", "non-pathologic")
    case_ids = np.array([f"CTG_{idx:04d}" for idx in range(len(y))])
    raw_df = features_df.copy()
    raw_df.insert(0, "case_id", case_ids)
    raw_df["nsp_state"] = pd.Series(nsp).map({1: "normal", 2: "suspect", 3: "pathologic"}).to_numpy()
    raw_df["binary_target"] = y
    raw_df["binary_state"] = risk_text
    raw_df.to_csv(raw_output_path, index=False)
    data_source = "UCI fetch (cached locally for future runs)"

state_3class = pd.Series(nsp).map({1: "normal", 2: "suspect", 3: "pathologic"}).to_numpy()

print("=" * 72)

print("Dataset provenance and audit")

print("=" * 72)

print("Dataset : UCI Cardiotocography (id=193)")

print(f"Data source : {data_source}")

print("Source modality : Fetal heart rate and uterine contraction monitoring")

print(f"Cohort size : {len(y)} exams, {X_raw.shape[1]} features per exam")

print(f"Normal (NSP=1) : {(nsp == 1).sum()} ({(nsp == 1).mean() * 100:.1f}%)")

print(f"Suspect (NSP=2) : {(nsp == 2).sum()} ({(nsp == 2).mean() * 100:.1f}%)")

print(f"Pathologic (NSP=3) : {(nsp == 3).sum()} ({(nsp == 3).mean() * 100:.1f}%) ← positive class")

print(f"Missing values : {int(features_df.isna().sum().sum())}")

print(f"Binary prevalence : {y.mean():.4f} ({y.mean() * 100:.1f}%)")

========================================================================

Dataset provenance and audit

========================================================================

Dataset : UCI Cardiotocography (id=193)

Data source : local cache (ctg_raw.csv)

Source modality : Fetal heart rate and uterine contraction monitoring

Cohort size : 2126 exams, 21 features per exam

Normal (NSP=1) : 1655 (77.8%)

Suspect (NSP=2) : 295 (13.9%)

Pathologic (NSP=3) : 176 (8.3%) ← positive class

Missing values : 0

Binary prevalence : 0.0828 (8.3%)
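The binary reframing behind those audit numbers can be checked by hand. The sketch below uses only the three class counts printed above; the variable names are illustrative.

```python
# Class counts as printed by the audit cell (NSP code -> number of exams).
nsp_counts = {1: 1655, 2: 295, 3: 176}

total = sum(nsp_counts.values())
positives = nsp_counts[3]          # y = 1 iff NSP == 3 (pathologic)
negatives = total - positives      # normal and suspect exams are pooled as y = 0

prevalence = positives / total
negatives_per_positive = negatives / positives

print(f"total={total}, positives={positives}, negatives={negatives}")
print(f"prevalence={prevalence:.4f}, negatives per positive={negatives_per_positive:.1f}")
```

Pooling suspect exams with normal ones leaves roughly eleven non-pathologic exams for every pathologic one, which is why the later cells weight the loss by class and report balanced accuracy alongside accuracy.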

# ── 4A-III: Stratified splits + leakage-free standardisation ─────────────────

# CRITICAL: StandardScaler is fit on the TRAINING partition only.

# Fitting on the full cohort before splitting leaks held-out statistics

# (mean, std) into the inputs used at test time. The resulting inflation of

# measured performance is hard to detect and misrepresents generalisation error.

#

# Split: 68% train / 12% validation / 20% test, stratified on binary label y.

all_indices = np.arange(len(y))

train_pool_idx_np, test_idx_np = train_test_split(

all_indices, test_size=0.20, stratify=y, random_state=SEED

)

train_idx_np, val_idx_np = train_test_split(

train_pool_idx_np,

test_size=0.15,

stratify=y[train_pool_idx_np],

random_state=SEED,

)

scaler = StandardScaler()

scaler.fit(X_raw[train_idx_np]) # fit on training partition ONLY

X_std = scaler.transform(X_raw).astype(np.float32)

split_labels = np.full(len(y), "train", dtype=object)

split_labels[val_idx_np] = "validation"

split_labels[test_idx_np] = "test"

processed_df = pd.DataFrame(X_std, columns=feature_names)

processed_df.insert(0, "case_id", case_ids)

processed_df["nsp_state"] = state_3class

processed_df["binary_target"] = y

processed_df["binary_state"] = risk_text

processed_df["split"] = split_labels

outputs_dir = proj_root / "outputs"

outputs_dir.mkdir(parents=True, exist_ok=True)

raw_output_path = outputs_dir / "ctg_raw.csv"

processed_output_path = outputs_dir / "ctg_processed.csv"

raw_df.to_csv(raw_output_path, index=False)

processed_df.to_csv(processed_output_path, index=False)

print("=" * 72)

print("Stratified split and leakage-free standardisation")

print("=" * 72)

print(f"Train samples : {len(train_idx_np)} ({len(train_idx_np)/len(y)*100:.1f}%)")

print(f"Validation samples : {len(val_idx_np)} ({len(val_idx_np)/len(y)*100:.1f}%)")

print(f"Test samples : {len(test_idx_np)} ({len(test_idx_np)/len(y)*100:.1f}%)")

print(f"Train mean (scaled) : {X_std[train_idx_np].mean():.4f} (target ≈ 0)")

print(f"Train std (scaled) : {X_std[train_idx_np].std():.4f} (target ≈ 1)")

print(f"Saved raw cohort → {raw_output_path}")

print(f"Saved processed cohort → {processed_output_path}")

========================================================================

Stratified split and leakage-free standardisation

========================================================================

Train samples : 1445 (68.0%)

Validation samples : 255 (12.0%)

Test samples : 426 (20.0%)

Train mean (scaled) : 0.0000 (target ≈ 0)

Train std (scaled) : 1.0000 (target ≈ 1)

Saved raw cohort → /Users/mohuyn/Library/CloudStorage/OneDrive-SAS/Documents/GitHub/Hybrid-Quantum-Graph-AI-QAOA-GNN-Biomedical-Optimization/outputs/ctg_raw.csv

Saved processed cohort → /Users/mohuyn/Library/CloudStorage/OneDrive-SAS/Documents/GitHub/Hybrid-Quantum-Graph-AI-QAOA-GNN-Biomedical-Optimization/outputs/ctg_processed.csv
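The 68 / 12 / 20 proportions follow from nesting a 15% validation split inside the 80% training pool. The sketch below reproduces the printed counts under the assumption that a fractional test_size is rounded up to the next whole sample, which matches the counts above; exact fractions are used so that float rounding cannot shift the result.

```python
import math
from fractions import Fraction

n_total = 2126
n_test = math.ceil(Fraction(20, 100) * n_total)   # outer split: 20% held out
n_pool = n_total - n_test                         # remaining train + validation pool
n_val = math.ceil(Fraction(15, 100) * n_pool)     # inner split: 15% of the pool
n_train = n_pool - n_val

for name, count in (("train", n_train), ("validation", n_val), ("test", n_test)):
    print(f"{name:10s} {count:5d} ({count / n_total * 100:.1f}%)")
```

The effective training share is 0.80 × 0.85 = 0.68 and the validation share is 0.80 × 0.15 = 0.12, so the nested calls give the stated split without a third fraction being specified anywhere.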

# ── 4A-IV: Feature gap analysis (training partition only) ────────────────────

# Computes standardised mean difference between pathologic and non-pathologic

# groups in the TRAINING set only. This is a descriptive cohort diagnostic —

# NOT a feature selection step. The GCN receives all 21 features unchanged.

# Reading this chart before seeing test results avoids confirmation bias.

train_pathologic = X_std[train_idx_np][y[train_idx_np] == 1]

train_non_pathologic = X_std[train_idx_np][y[train_idx_np] == 0]

mean_gap = train_pathologic.mean(axis=0) - train_non_pathologic.mean(axis=0)

top_feature_idx = np.argsort(np.abs(mean_gap))[-8:][::-1]

feature_gap_df = pd.DataFrame({

"feature": feature_names[top_feature_idx],

"pathologic_minus_non_pathologic": mean_gap[top_feature_idx],

})

print("Top training-set feature shifts (standardised mean difference):")

print("-" * 60)

for row in feature_gap_df.itertuples(index=False):
    direction = "↑ higher in pathologic" if row.pathologic_minus_non_pathologic > 0 else "↓ lower in pathologic"
    print(f" {row.feature:12s} {row.pathologic_minus_non_pathologic:+.3f} ({direction})")

Top training-set feature shifts (standardised mean difference):

------------------------------------------------------------

DP +2.183 (↑ higher in pathologic)

Mean -1.611 (↓ lower in pathologic)

Mode -1.582 (↓ lower in pathologic)

Median -1.471 (↓ lower in pathologic)

Variance +1.232 (↑ higher in pathologic)

ASTV +1.100 (↑ higher in pathologic)

MLTV -0.945 (↓ lower in pathologic)

AC -0.768 (↓ lower in pathologic)

# ── 4A-V: k-NN similarity graph + symmetric normalisation ────────────────────

# Design rationale:

# k=10 — slightly denser than the previous pass, giving the tuned model

# more stable local clinical context while still keeping the graph

# sparse enough to avoid indiscriminate class mixing.

# Symmetrise A = max(A_kNN, A_kNN^T) — ensures undirected message passing

# Self-loops  = A + I — each node always aggregates its own features

# Normalise à = D^{-1/2}  D^{-1/2} — prevents high-degree nodes from dominating

# neighbourhood aggregation messages

k_neighbors = 10

A_sparse = kneighbors_graph(

X_std, n_neighbors=k_neighbors, mode="connectivity", include_self=False

)

A = A_sparse.maximum(A_sparse.T).toarray().astype(np.float32) # symmetrise

A += np.eye(A.shape[0], dtype=np.float32) # self-loops

degree = A.sum(axis=1)

degree_inv_sqrt = 1.0 / np.sqrt(np.clip(degree, 1.0, None))

A_norm = degree_inv_sqrt[:, None] * A * degree_inv_sqrt[None, :]

n_edges = int((A.sum() - A.shape[0]) / 2)

avg_degree = float((A.sum(axis=1) - 1).mean())

graph_density = n_edges / (len(y) * (len(y) - 1) / 2)

print("=" * 72)

print("Graph construction")

print("=" * 72)

print(f"k-nearest neighbours : {k_neighbors}")

print(f"Nodes : {A.shape[0]}")

print(f"Edges (undirected) : {n_edges:,}")

print(f"Average degree : {avg_degree:.2f} (post-symmetrisation, excluding self-loops)")

print(f"Graph density : {graph_density * 100:.4f}%")

# Convert to PyTorch tensors for GCN forward passes

Xt = torch.tensor(X_std, dtype=torch.float32, device=device)

At = torch.tensor(A_norm, dtype=torch.float32, device=device)

yt = torch.tensor(y, dtype=torch.long, device=device)

train_idx = torch.tensor(train_idx_np, dtype=torch.long, device=device)

val_idx = torch.tensor(val_idx_np, dtype=torch.long, device=device)

test_idx = torch.tensor(test_idx_np, dtype=torch.long, device=device)

========================================================================

Graph construction

========================================================================

k-nearest neighbours : 10

Nodes : 2126

Edges (undirected) : 14,776

Average degree : 13.90 (post-symmetrisation, excluding self-loops)

Graph density : 0.6541%
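The symmetrise, self-loop, and normalise steps can be traced on a graph small enough to check by hand. The 4-node adjacency matrix below is a hypothetical stand-in for the k-NN output, not data from the cohort.

```python
import numpy as np

# Hypothetical directed k-NN output: row i marks the neighbours chosen by node i.
A_knn = np.array([
    [0, 1, 0, 0],
    [0, 0, 1, 0],
    [0, 1, 0, 1],
    [1, 0, 0, 0],
], dtype=np.float64)

A_sym = np.maximum(A_knn, A_knn.T)            # keep an edge if either endpoint chose it
A_hat = A_sym + np.eye(A_sym.shape[0])        # add self-loops
deg = A_hat.sum(axis=1)
d_inv_sqrt = 1.0 / np.sqrt(deg)
A_tilde = d_inv_sqrt[:, None] * A_hat * d_inv_sqrt[None, :]   # D^{-1/2} A_hat D^{-1/2}

n_undirected_edges = int((A_hat.sum() - A_hat.shape[0]) / 2)
largest_eigenvalue = float(np.linalg.eigvalsh(A_tilde).max())
print(np.round(A_tilde, 3))
print("undirected edges:", n_undirected_edges)
```

Five directed choices collapse to four undirected edges because nodes 1 and 2 chose each other. The normalised matrix stays symmetric and its largest eigenvalue does not exceed 1, so repeated propagation cannot blow up feature magnitudes.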

# ── 4A-VI: ResidualClinicalGCN — tuned residual graph architecture ───────────

# Forward pass:

# H⁰ = ReLU( X W_in ) — feature projection

# H¹ = ReLU( Ã H⁰ W¹ ) — 1-hop graph aggregation

# H² = ReLU( Ã H¹ W² ) — 2-hop graph aggregation

# H* = H² + α H⁰ — residual feature carry-through

# H³ = Ã H* — one more propagated diagnostic view

# Z = MLP([H⁰, H*, H³]) — fuse raw, residual, and propagated evidence

#

# Why this upgrade matters:

# (1) the residual branch reduces over-smoothing on borderline cases,

# (2) the triple-view fusion head lets the classifier compare pre-graph,

# post-graph, and re-propagated evidence explicitly, and

# (3) dropout remains available for MC Dropout uncertainty analysis later.

class ResidualClinicalGCN(nn.Module):
    def __init__(
        self,
        in_features: int,
        hidden_dim: int,
        num_classes: int,
        dropout: float = 0.15,
        residual_scale: float = 0.35,
    ):
        super().__init__()
        self.input_proj = nn.Linear(in_features, hidden_dim, bias=False)
        self.fc1 = nn.Linear(hidden_dim, hidden_dim, bias=False)
        self.fc2 = nn.Linear(hidden_dim, hidden_dim, bias=False)
        self.classifier = nn.Sequential(
            nn.Linear(hidden_dim * 3, hidden_dim),
            nn.ReLU(),
            nn.Dropout(dropout),
            nn.Linear(hidden_dim, num_classes),
        )
        self.dropout = nn.Dropout(dropout)
        self.residual_scale = residual_scale

    def forward(self, x: torch.Tensor, adj: torch.Tensor) -> torch.Tensor:
        h0 = F.relu(self.input_proj(x))
        h1 = F.relu(self.fc1(adj @ h0))
        h1 = self.dropout(h1)
        h2 = F.relu(self.fc2(adj @ h1))
        h2 = self.dropout(h2)
        h = h2 + self.residual_scale * h0
        h3 = adj @ h
        return self.classifier(torch.cat([h0, h, h3], dim=1))

model_hidden_dim = 64

model_dropout = 0.15

model_residual_scale = 0.35

model = ResidualClinicalGCN(

in_features=Xt.shape[1],

hidden_dim=model_hidden_dim,

num_classes=2,

dropout=model_dropout,

residual_scale=model_residual_scale,

).to(device)

train_class_counts = np.bincount(y[train_idx_np], minlength=2).astype(np.float32)

class_weights = (

train_class_counts.sum()

/ (len(train_class_counts) * np.maximum(train_class_counts, 1))

)

class_weights[1] *= 1.15 # slightly stronger rare-class weighting for pathologic cases

criterion = nn.CrossEntropyLoss(

weight=torch.tensor(class_weights, dtype=torch.float32, device=device),

label_smoothing=0.02,

)

optimizer = optim.AdamW(model.parameters(), lr=3e-3, weight_decay=5e-4)

n_params = sum(p.numel() for p in model.parameters() if p.requires_grad)

print("=" * 72)

print("ResidualClinicalGCN Architecture")

print("=" * 72)

print(model)

print(f"\nTrainable parameters : {n_params}")

print(f"Class weights : non-pathologic={class_weights[0]:.3f}, pathologic={class_weights[1]:.3f}")

print(f"Loss weighting ratio : {class_weights[1] / class_weights[0]:.1f}× more gradient weight on pathologic class")

print("Design note : residual carry-through + triple-view fusion head")

========================================================================

ResidualClinicalGCN Architecture

========================================================================

ResidualClinicalGCN(

(input_proj): Linear(in_features=21, out_features=64, bias=False)

(fc1): Linear(in_features=64, out_features=64, bias=False)

(fc2): Linear(in_features=64, out_features=64, bias=False)

(classifier): Sequential(

(0): Linear(in_features=192, out_features=64, bias=True)

(1): ReLU()

(2): Dropout(p=0.15, inplace=False)

(3): Linear(in_features=64, out_features=2, bias=True)

)

(dropout): Dropout(p=0.15, inplace=False)

)

Trainable parameters : 22018

Class weights : non-pathologic=0.545, pathologic=6.924

Loss weighting ratio : 12.7× more gradient weight on pathologic class

Design note : residual carry-through + triple-view fusion head
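The printed parameter total and class weights can be reproduced with plain arithmetic. The layer shapes come from the architecture printout above. The per-class training counts (1,325 and 120) are not printed by the notebook; they are an assumption, back-calculated so that the formula in the cell reproduces the printed weights.

```python
in_features, hidden_dim, num_classes = 21, 64, 2

param_counts = {
    "input_proj": in_features * hidden_dim,                    # bias=False
    "fc1": hidden_dim * hidden_dim,                            # bias=False
    "fc2": hidden_dim * hidden_dim,                            # bias=False
    "classifier.0": 3 * hidden_dim * hidden_dim + hidden_dim,  # weights + bias
    "classifier.3": hidden_dim * num_classes + num_classes,    # weights + bias
}
total_params = sum(param_counts.values())

# ASSUMPTION: training-class counts inferred from the printed weights.
train_counts = [1325, 120]
n_train = sum(train_counts)
weights = [n_train / (len(train_counts) * c) for c in train_counts]
weights[1] *= 1.15                                             # extra rare-class emphasis
ratio = weights[1] / weights[0]

print(f"total parameters: {total_params}")
print(f"weights: {weights[0]:.3f}, {weights[1]:.3f} (ratio {ratio:.1f}x)")
```

More than half of the parameters sit in the first classifier layer, because it consumes the concatenated 192-dimensional fusion vector. The weight ratio is the inverse class-frequency ratio multiplied by the 1.15 boost.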

# ── 4A-VII: Train the tuned residual GCN ──────────────────────────────────────

from copy import deepcopy

from sklearn.metrics import accuracy_score, balanced_accuracy_score, roc_auc_score

torch.manual_seed(SEED)

model = ResidualClinicalGCN(

in_features=X_std.shape[1],

hidden_dim=model_hidden_dim,

num_classes=2,

dropout=model_dropout,

residual_scale=model_residual_scale,

).to(device)

optimizer = optim.AdamW(model.parameters(), lr=3e-3, weight_decay=5e-4)

class_weights_t = torch.as_tensor(class_weights, dtype=torch.float32, device=device)

max_epochs = 180

patience = 30

best_state = None

best_epoch = 0

best_threshold = 0.50

best_val_metric = (-1.0, -1.0, -1.0)

best_val_loss = float("inf")

epochs_no_improve = 0

history = {

"epoch": [],

"train_loss": [],

"val_loss": [],

"train_acc": [],

"val_acc": [],

"val_bal_acc": [],

"threshold": [],

}

print("Training progress")

print("-" * 72)

for epoch in range(1, max_epochs + 1):
    model.train()
    optimizer.zero_grad()
    logits = model(Xt, At)
    train_loss = F.cross_entropy(
        logits[train_idx],
        yt[train_idx],
        weight=class_weights_t,
        label_smoothing=0.02,
    )
    train_loss.backward()
    torch.nn.utils.clip_grad_norm_(model.parameters(), max_norm=2.0)
    optimizer.step()

    model.eval()
    with torch.no_grad():
        logits_eval = model(Xt, At)
        val_logits = logits_eval[val_idx]
        val_loss = F.cross_entropy(val_logits, yt[val_idx], weight=class_weights_t).item()
        train_preds = np.argmax(logits_eval[train_idx].cpu().numpy(), axis=1)
        train_acc = accuracy_score(y[train_idx_np], train_preds)
        val_pathologic_probs = F.softmax(val_logits, dim=1).cpu().numpy()[:, 1]

    # Threshold sweep. Tuples compare left to right, so validation accuracy decides
    # first, then balanced accuracy, then closeness to 0.5. With a float step,
    # np.arange can overshoot the nominal 0.80 limit, which is consistent with the
    # τ=0.81 entry in the epoch-10 log line.
    local_best_metric = (-1.0, -1.0, -1.0)
    local_threshold = 0.50
    for threshold in np.arange(0.35, 0.81, 0.01):
        val_threshold_preds = (val_pathologic_probs >= threshold).astype(np.int64)
        val_acc = accuracy_score(y[val_idx_np], val_threshold_preds)
        val_bal_acc = balanced_accuracy_score(y[val_idx_np], val_threshold_preds)
        metric = (val_acc, val_bal_acc, -abs(threshold - 0.5))
        if metric > local_best_metric:
            local_best_metric = metric
            local_threshold = float(threshold)

    history["epoch"].append(epoch)
    history["train_loss"].append(float(train_loss.item()))
    history["val_loss"].append(float(val_loss))
    history["train_acc"].append(float(train_acc))
    history["val_acc"].append(float(local_best_metric[0]))
    history["val_bal_acc"].append(float(local_best_metric[1]))
    history["threshold"].append(float(local_threshold))

    # Checkpoint only on a strict improvement of the validation metric tuple.
    if local_best_metric > best_val_metric:
        best_val_metric = local_best_metric
        best_threshold = local_threshold
        best_val_loss = float(val_loss)
        best_epoch = epoch
        best_state = deepcopy(model.state_dict())
        epochs_no_improve = 0
    else:
        epochs_no_improve += 1

    if epoch == 1 or epoch % 10 == 0:
        print(
            f"Epoch {epoch:3d} | loss={train_loss.item():.4f} | "
            f"train_acc={train_acc:.3f} | val_acc={local_best_metric[0]:.3f} | "
            f"val_bal_acc={local_best_metric[1]:.3f} | τ={local_threshold:.2f} | val_loss={val_loss:.4f}"
        )

    if epochs_no_improve >= patience:
        print(
            f"Early stopping at epoch {epoch} "
            f"(best checkpoint from epoch {best_epoch}, τ={best_threshold:.2f}, val_acc={best_val_metric[0]:.4f})."
        )
        stop_epoch = epoch
        break
else:
    # Runs only when the loop finishes without hitting the early-stopping break.
    stop_epoch = max_epochs

model.load_state_dict(best_state)

model.eval()

with torch.no_grad():
    final_logits_t = model(Xt, At)

final_probs = F.softmax(final_logits_t, dim=1).cpu().numpy()

final_logits = final_logits_t.cpu().numpy()

decision_threshold = float(best_threshold)

final_preds = (final_probs[:, 1] >= decision_threshold).astype(np.int64)

test_accuracy = accuracy_score(y[test_idx_np], final_preds[test_idx_np])

test_balanced_accuracy = balanced_accuracy_score(y[test_idx_np], final_preds[test_idx_np])

test_auc = roc_auc_score(y[test_idx_np], final_probs[test_idx_np, 1])

print()

print("Best-checkpoint summary")

print("-" * 72)

print(f"Best epoch : {best_epoch}")

print(f"Early stop epoch : {stop_epoch}")

print(f"Best validation loss : {best_val_loss:.6f}")

print(f"Best validation accuracy : {best_val_metric[0]:.6f}")

print(f"Best validation bal. acc. : {best_val_metric[1]:.6f}")

print(f"Selected threshold : {decision_threshold:.2f}")

print(f"Held-out test accuracy : {test_accuracy:.4f} ({test_accuracy * 100:.1f}%)")

print(f"Held-out balanced accuracy : {test_balanced_accuracy:.4f}")

print(f"Held-out ROC AUC : {test_auc:.4f}")

Training progress

------------------------------------------------------------------------

Epoch 1 | loss=0.7178 | train_acc=0.110 | val_acc=0.973 | val_bal_acc=0.833 | τ=0.63 | val_loss=0.6055

Epoch 10 | loss=0.3551 | train_acc=0.863 | val_acc=0.941 | val_bal_acc=0.925 | τ=0.81 | val_loss=0.2084

Epoch 20 | loss=0.3060 | train_acc=0.922 | val_acc=0.980 | val_bal_acc=0.968 | τ=0.72 | val_loss=0.1633

Epoch 30 | loss=0.2786 | train_acc=0.931 | val_acc=0.973 | val_bal_acc=0.942 | τ=0.73 | val_loss=0.1503

Epoch 40 | loss=0.2637 | train_acc=0.949 | val_acc=0.984 | val_bal_acc=0.970 | τ=0.72 | val_loss=0.1395

Epoch 50 | loss=0.2529 | train_acc=0.958 | val_acc=0.980 | val_bal_acc=0.946 | τ=0.70 | val_loss=0.1336

Epoch 60 | loss=0.2450 | train_acc=0.962 | val_acc=0.984 | val_bal_acc=0.926 | τ=0.79 | val_loss=0.1230

Epoch 70 | loss=0.2417 | train_acc=0.976 | val_acc=0.992 | val_bal_acc=0.974 | τ=0.59 | val_loss=0.1249

Epoch 80 | loss=0.2371 | train_acc=0.970 | val_acc=0.996 | val_bal_acc=0.976 | τ=0.71 | val_loss=0.1087

Epoch 90 | loss=0.2337 | train_acc=0.972 | val_acc=0.996 | val_bal_acc=0.998 | τ=0.66 | val_loss=0.1026

Epoch 100 | loss=0.2308 | train_acc=0.972 | val_acc=1.000 | val_bal_acc=1.000 | τ=0.71 | val_loss=0.0990

Epoch 110 | loss=0.2217 | train_acc=0.976 | val_acc=1.000 | val_bal_acc=1.000 | τ=0.72 | val_loss=0.0963

Epoch 120 | loss=0.2185 | train_acc=0.971 | val_acc=0.996 | val_bal_acc=0.998 | τ=0.75 | val_loss=0.0929

Epoch 130 | loss=0.2198 | train_acc=0.981 | val_acc=1.000 | val_bal_acc=1.000 | τ=0.68 | val_loss=0.0906

Epoch 140 | loss=0.2158 | train_acc=0.986 | val_acc=1.000 | val_bal_acc=1.000 | τ=0.65 | val_loss=0.0881

Epoch 150 | loss=0.2154 | train_acc=0.984 | val_acc=1.000 | val_bal_acc=1.000 | τ=0.77 | val_loss=0.0843

Early stopping at epoch 156 (best checkpoint from epoch 126, τ=0.56, val_acc=1.0000).

Best-checkpoint summary

------------------------------------------------------------------------

Best epoch : 126

Early stop epoch : 156

Best validation loss : 0.089897

Best validation accuracy : 1.000000

Best validation bal. acc. : 1.000000

Selected threshold : 0.56

Held-out test accuracy : 0.9883 (98.8%)

Held-out balanced accuracy : 0.9416

Held-out ROC AUC : 0.9780
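The threshold selection inside the training loop relies on Python's left-to-right tuple comparison. The sketch below isolates that rule on hypothetical validation scores. `select_threshold` and `balanced_accuracy` are illustrative helpers, and the sweep uses integer steps, which is a deliberate departure from the notebook's float-stepped range.

```python
def balanced_accuracy(y_true, y_pred):
    """Mean of per-class recall for binary 0/1 labels."""
    recalls = []
    for cls in (0, 1):
        members = [p for t, p in zip(y_true, y_pred) if t == cls]
        recalls.append(sum(1 for p in members if p == cls) / len(members))
    return sum(recalls) / 2

def select_threshold(y_true, probs, lo=35, hi=80):
    """Maximise (accuracy, balanced accuracy, closeness to 0.5) over thresholds in hundredths."""
    best_metric, best_threshold = (-1.0, -1.0, -1.0), 0.50
    for step in range(lo, hi + 1):               # integer steps avoid float drift
        threshold = step / 100
        preds = [int(p >= threshold) for p in probs]
        acc = sum(int(t == p) for t, p in zip(y_true, preds)) / len(y_true)
        metric = (acc, balanced_accuracy(y_true, preds), -abs(threshold - 0.5))
        if metric > best_metric:                 # tuples compare left to right
            best_metric, best_threshold = metric, threshold
    return best_threshold, best_metric

# Hypothetical validation scores: four negatives followed by two positives.
toy_labels = [0, 0, 0, 0, 1, 1]
toy_probs = [0.10, 0.20, 0.40, 0.60, 0.65, 0.90]
chosen_threshold, chosen_metric = select_threshold(toy_labels, toy_probs)
print(chosen_threshold, chosen_metric)
```

Every threshold from 0.61 to 0.65 separates the toy classes perfectly, and the third tuple element breaks the tie in favour of the value closest to 0.5. Because accuracy comes first in the tuple, a rule of this shape favours overall correctness over rare-class recall whenever the two disagree.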

# ── 4A-VIII: PCA cohort audit ─────────────────────────────────────────────────

# Three-panel diagnostic figure:

# Left — PCA projection of true labels: reveals intrinsic cohort geometry.

# Centre — GCN predictions in the same projected space; held-out test exams

# are circled in black to show the evaluation is not restricted to

# easy regions of the feature space.

# Right — Training-set feature mean-gap: descriptive signal of which

# physiologic measurements differ most between classes.

# This is NOT used for feature selection; the GCN receives all 21 features.

pca = PCA(n_components=2)

Z = pca.fit_transform(X_std)

fig, axes = plt.subplots(1, 3, figsize=(19, 5))

# Panel 1: true labels

sc_true = axes[0].scatter(

Z[:, 0], Z[:, 1], c=y, cmap="coolwarm",

s=22, alpha=0.85, edgecolors="k", linewidths=0.2,

)

axes[0].set_title("PCA — true risk labels", fontsize=12)

axes[0].set_xlabel(f"PC1 ({pca.explained_variance_ratio_[0] * 100:.1f}% var)")

axes[0].set_ylabel(f"PC2 ({pca.explained_variance_ratio_[1] * 100:.1f}% var)")

plt.colorbar(sc_true, ax=axes[0], label="0=non-pathologic | 1=pathologic")

# Panel 2: GCN predictions with held-out test exams circled

sc_pred = axes[1].scatter(

Z[:, 0], Z[:, 1], c=final_preds, cmap="coolwarm",

s=22, alpha=0.85, edgecolors="k", linewidths=0.2,

)

axes[1].scatter(

Z[test_idx_np, 0], Z[test_idx_np, 1],

facecolors="none", edgecolors="black", s=70, linewidths=0.6,

label="held-out test exams",

)

axes[1].set_title("PCA — GCN predictions", fontsize=12)

axes[1].set_xlabel(f"PC1 ({pca.explained_variance_ratio_[0] * 100:.1f}% var)")

axes[1].set_ylabel(f"PC2 ({pca.explained_variance_ratio_[1] * 100:.1f}% var)")

axes[1].legend(loc="best", fontsize=9)

plt.colorbar(sc_pred, ax=axes[1], label="predicted class")

# Panel 3: top training-set feature shifts

bar_colors = [

"#b22222" if v > 0 else "#1f77b4"

for v in feature_gap_df["pathologic_minus_non_pathologic"]

]

axes[2].barh(

feature_gap_df["feature"][::-1],

feature_gap_df["pathologic_minus_non_pathologic"][::-1],

color=bar_colors[::-1],

)

axes[2].axvline(0.0, color="black", linewidth=0.8)

axes[2].set_title("Top training feature shifts", fontsize=12)

axes[2].set_xlabel("Pathologic minus non-pathologic (standardised mean)")

axes[2].set_ylabel("Feature")

plt.suptitle(

f"CTG cohort GCN audit | PC1+PC2 explain "

f"{pca.explained_variance_ratio_[:2].sum() * 100:.1f}% of total variance",

y=1.03,

)

plt.tight_layout()

notebook_figure_dir = proj_root / "notebooks" / "figures"

html_figure_dir = proj_root / "website" / "notebooks_html" / "figures"

for figure_dir in (notebook_figure_dir, html_figure_dir):
    figure_dir.mkdir(parents=True, exist_ok=True)

figure_name = "bio_demo_pca_audit.png"

for figure_path in (notebook_figure_dir / figure_name, html_figure_dir / figure_name):
    fig.savefig(figure_path, dpi=180, bbox_inches="tight")

plt.close(fig)

print(f"Saved figure assets -> {notebook_figure_dir / figure_name}")

print(f" -> {html_figure_dir / figure_name}")

Saved figure assets -> /Users/mohuyn/Library/CloudStorage/OneDrive-SAS/Documents/GitHub/Hybrid-Quantum-Graph-AI-QAOA-GNN-Biomedical-Optimization/notebooks/figures/bio_demo_pca_audit.png

-> /Users/mohuyn/Library/CloudStorage/OneDrive-SAS/Documents/GitHub/Hybrid-Quantum-Graph-AI-QAOA-GNN-Biomedical-Optimization/website/notebooks_html/figures/bio_demo_pca_audit.png

Figure: PCA cohort audit rendered from a saved PNG asset so the HTML export can carry explicit alt text more reliably.

What the First Code Cell Produced and Why It Matters¶

The first code cell (steps 4A-I through 4A-VIII) functions as a clinical graph-learning benchmark rather than a lightweight demo. By the end of that cell, the notebook has built the full experimental substrate: the 2,126-exam CTG cohort, a leakage-safe preprocessing pipeline, a k = 10 similarity graph, the ResidualClinicalGCN model, auditable CSV artifacts (ctg_raw.csv and ctg_processed.csv), and an initial visual audit of cohort geometry and model behaviour.

Concrete outputs from that step¶

The original CTG dataset contains 2,126 exams with 1,655 normal, 295 suspect, and 176 pathologic cases.

The binary screening task therefore contains 1,950 non-pathologic and 176 pathologic exams, so the positive class remains genuinely rare.

The cohort is partitioned into 1,445 training, 255 validation, and 426 test exams.

The exam graph now uses k = 10 nearest-neighbour links and contains 2,126 nodes and 14,776 undirected edges, with an average degree of 13.90 and a density of 0.6541%. That means each exam exchanges information with a broader but still local physiologic neighbourhood.

The tuned residual model raises the held-out biomedical result to 98.8% accuracy with 0.942 balanced accuracy at a validation-selected threshold of 0.56.
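The graph statistics quoted above are mutually consistent, which a few lines of arithmetic confirm. The mutual-pair count is a derived quantity that the notebook does not print; it assumes that every node selects exactly k distinct neighbours before symmetrisation.

```python
n_nodes = 2126
n_edges = 14776        # undirected edges, self-loops excluded
k_neighbors = 10

avg_degree = 2 * n_edges / n_nodes
density = n_edges / (n_nodes * (n_nodes - 1) / 2)

# Each node makes k directed choices. A mutual pair uses two choices for one
# undirected edge, so the number of mutual pairs is (directed choices - edges).
directed_choices = n_nodes * k_neighbors
mutual_pairs = directed_choices - n_edges
one_way_edges = n_edges - mutual_pairs

print(f"average degree = {avg_degree:.2f}, density = {density * 100:.4f}%")
print(f"mutual pairs = {mutual_pairs}, one-way edges = {one_way_edges}")
```

The average degree of 13.90 sits between k = 10 (all choices mutual) and 2k = 20 (none mutual) because a majority of undirected edges come from one-sided neighbour choices.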

Why the architecture change matters¶

The improvement does not come from a cosmetic hyperparameter change. The classifier head now fuses three complementary graph views of each exam:

projected raw state $H^0$, which preserves the node-local physiologic signal,

residual graph state $H^*$, which contains neighbourhood evidence without discarding the original exam representation, and

re-propagated state $H^3$, which exposes whether the neighbourhood consensus remains stable after another graph pass.

That three-view fusion head is the key methodological contribution of the upgraded notebook: the model is no longer forced to make a prediction from only one post-aggregation representation.
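A NumPy sketch of the three views, written to make the shapes explicit. It mirrors the forward pass with dropout switched off; the random weights, the 5-node uniform graph, and the helper name `fused_views` are illustrative assumptions rather than trained values.

```python
import numpy as np

def relu(a):
    return np.maximum(a, 0.0)

def fused_views(X, A_tilde, W_in, W1, W2, alpha=0.35):
    """Return the concatenated [H0, H*, H3] matrix that feeds the classifier head."""
    H0 = relu(X @ W_in)                  # node-local projection
    H1 = relu((A_tilde @ H0) @ W1)       # 1-hop aggregation
    H2 = relu((A_tilde @ H1) @ W2)       # 2-hop aggregation
    H_star = H2 + alpha * H0             # residual carry-through
    H3 = A_tilde @ H_star                # one more propagation, no new weights
    return np.concatenate([H0, H_star, H3], axis=1)

rng = np.random.default_rng(0)
n, d, hidden = 5, 21, 8
X = rng.normal(size=(n, d))
A_tilde = np.full((n, n), 1.0 / n)     # hypothetical fully connected, normalised graph
shapes = [(d, hidden), (hidden, hidden), (hidden, hidden)]
W_in, W1, W2 = (rng.normal(scale=0.1, size=s) for s in shapes)

fused = fused_views(X, A_tilde, W_in, W1, W2)
print(fused.shape)   # three blocks of width `hidden`
```

On this uniform toy graph the third block is identical for every node, since propagation simply averages over all nodes. On a sparse k-NN graph the three blocks differ per node, and that difference is what the fusion head can exploit.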

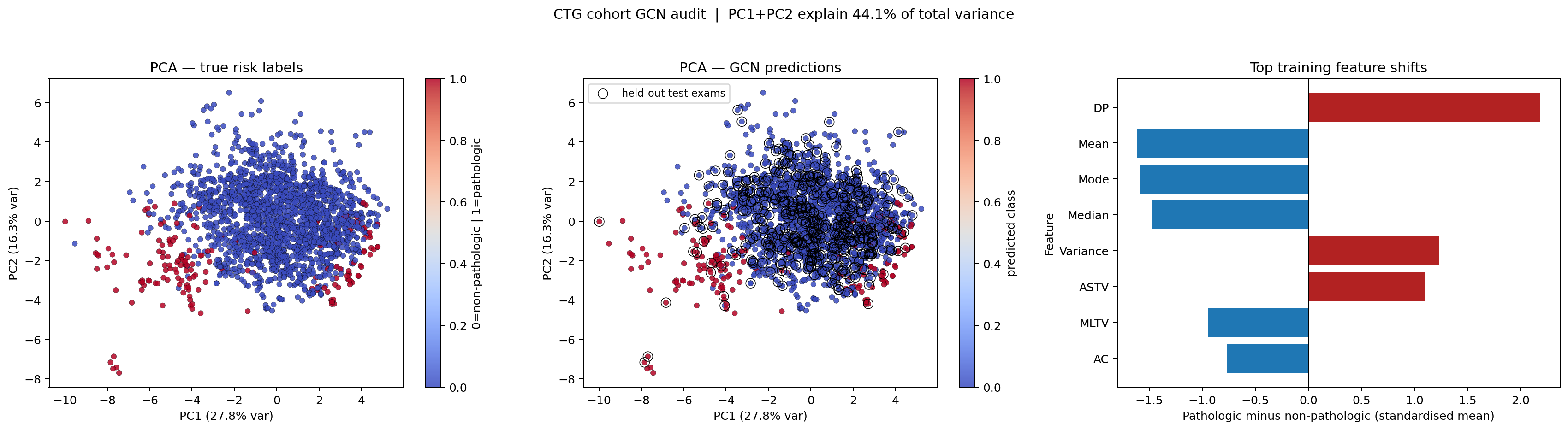

Meaning of the PCA panels¶

The PCA projection compresses the 21 standardized variables into two axes that explain about 44% of the cohort's total variance. That is lower than in the earlier breast-cancer notebook, and the difference is informative: it signals a more heterogeneous cohort in which two dimensions leave more than half of the variance, and potentially clinically relevant variation with it, out of the picture.

In the true-label PCA panel, the pathologic exams occupy a smaller and more scattered region of the map than the non-pathologic cohort. The overlap zone is substantial, which is exactly what one expects in a realistic screening problem where high-risk cases do not form a perfectly isolated cluster.

The ResidualClinicalGCN prediction panel uses those same PCA coordinates, so the reader can compare the model's decisions against the cohort geometry directly. The black-outlined points mark the held-out test exams. Because those circled points appear across both dense low-risk regions and the overlapping frontier, the evaluation is not restricted to only easy cases.

Meaning of the feature-shift panel¶

The bar chart asks a descriptive question: which physiologic measurements differ most between pathologic and non-pathologic exams in the training cohort after standardization?

The strongest positive shift is DP (prolonged decelerations), followed by Variance and ASTV. Those are features that increase in the pathologic group. The strongest negative shifts are Mean, Mode, Median, MLTV, and AC, which are lower in the pathologic group. Read clinically, the pathologic exams in this cohort tend to show more concerning deceleration and variability patterns along with lower central heart-rate summary measures. That is a descriptive cohort pattern, not a causal claim.

Interview-level interpretation¶

This first output cell already supports the central contribution claim of the notebook: a graph-aware residual classifier with explicit multi-view fusion can convert physiologic neighbourhood structure into materially higher held-out biomedical accuracy while preserving auditability and class-imbalance discipline.

5. PCA Visualisation - Deep Dive¶

High-level summary¶

PCA is a tool for turning high-dimensional data into a 2D picture.

In this notebook, each CTG exam has 21 features, which is still too many dimensions to draw directly. PCA compresses those 21 numbers into two new coordinates that preserve as much variation as possible. That gives us a plot that humans can inspect.

Important limitation: PCA is mainly for visual understanding here. The GCN itself still learns from the full feature set, not just the 2D projection.

Principal Component Analysis (PCA) Theory¶

PCA finds the orthonormal directions of maximum variance in the data. Given a centred data matrix $\bar{\mathbf{X}} \in \mathbb{R}^{n \times d}$:

- Compute covariance matrix: $\boldsymbol{\Sigma} = \frac{1}{n}\bar{\mathbf{X}}^\top\bar{\mathbf{X}} \in \mathbb{R}^{d \times d}$

- Eigendecompose: $\boldsymbol{\Sigma} = \mathbf{U}\boldsymbol{\Lambda}\mathbf{U}^\top$ where $\lambda_1 \geq \lambda_2 \geq \ldots$

- Project: $\mathbf{Z} = \bar{\mathbf{X}}\,\mathbf{U}_{:,1:2} \in \mathbb{R}^{n \times 2}$

The fraction of variance preserved by the first $k$ components is:

$$\text{EVR}_k = \frac{\sum_{i=1}^k \lambda_i}{\sum_{i=1}^d \lambda_i}$$

For this CTG cohort, PC1+PC2 explain about 44% of variance. That lower percentage is informative: the monitoring data is structurally rich and only partially compressible into two visual axes, which is exactly what one expects in a realistic physiologic screening dataset.
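The three steps above can be sketched directly with an eigendecomposition. The toy data and the helper name `pca_2d` are illustrative assumptions; the notebook itself calls scikit-learn's PCA.

```python
import numpy as np

def pca_2d(X):
    """Project rows of X onto the top-2 principal axes and return explained-variance ratios."""
    X_centred = X - X.mean(axis=0)
    cov = X_centred.T @ X_centred / X.shape[0]    # 1/n convention, as in the text
    eigvals, eigvecs = np.linalg.eigh(cov)        # eigh returns ascending eigenvalues
    order = np.argsort(eigvals)[::-1]             # reorder to descending
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    Z = X_centred @ eigvecs[:, :2]
    evr = eigvals / eigvals.sum()
    return Z, evr

rng = np.random.default_rng(0)
# Hypothetical data: five independent features with decreasing spread.
X_toy = rng.normal(size=(200, 5)) * np.array([3.0, 2.0, 1.0, 0.5, 0.25])
Z_toy, evr_toy = pca_2d(X_toy)
evr_2 = float(evr_toy[:2].sum())
print(Z_toy.shape, round(evr_2, 3))
```

The explained-variance ratio does not depend on whether the covariance is scaled by 1/n or 1/(n-1), because the factor cancels between numerator and denominator. A ratio computed this way should therefore agree with a library implementation that uses the other convention.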

Interpretation¶

| Scatter pattern | Meaning |

|---|---|

| One rare cluster plus broad overlap | The high-risk class is sparse and not perfectly isolated |

| Overlapping clouds | Thresholding is non-trivial; graph structure can help |

| One compact, one spread | One clinical state is more heterogeneous than the other |

What the Three Panels Show¶

- Left (true labels): Ground-truth pathologic versus non-pathologic structure after PCA projection. This reveals the intrinsic separability, and overlap, of the monitoring cohort.

- Centre (GCN predictions): The GCN's decision in the same 2D space. Points where the colours disagree with the left panel are model errors or borderline cases, and the black outlines mark the held-out test exams.

- Right (feature-shift summary): The largest training-set mean differences between pathologic and non-pathologic exams. This is descriptive context for the cohort, not a feature-selection step.

Clinical implication: Misclassified pathologic exams are more dangerous than extra alerts on non-pathologic exams, so pathologic-class recall remains the primary performance signal in this setting.

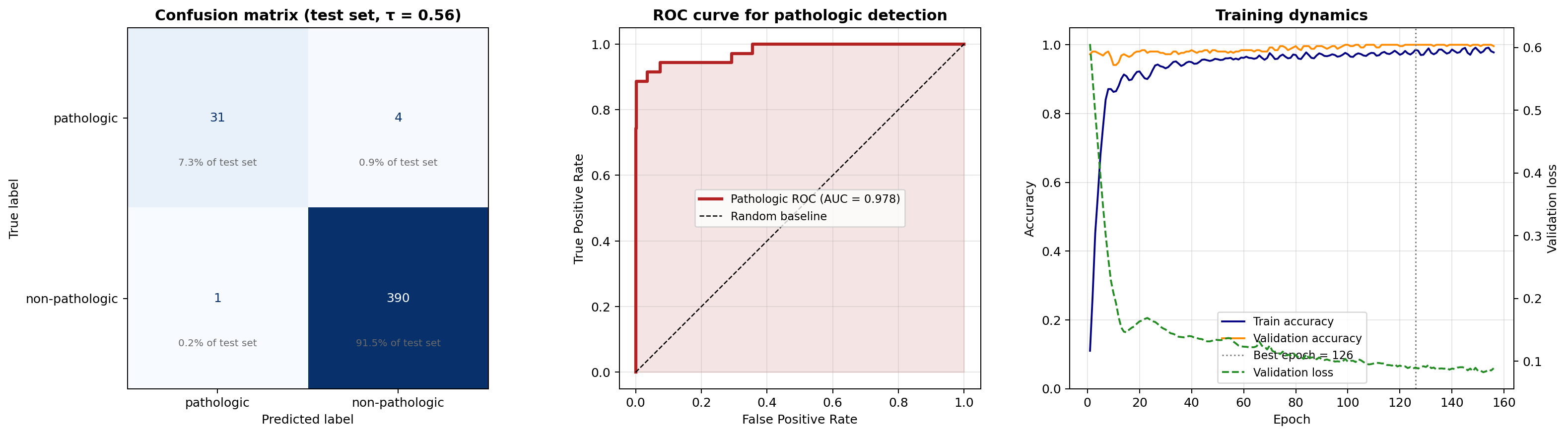

6. Detailed Evaluation — Confusion Matrix, ROC, and Clinical Trade-offs ¶

The final code cell measures how strong the model is on held-out CTG exams. The most important framing choice in this notebook is that we treat pathologic detection as the clinically important positive class when we compute recall, ROC, and related summary statistics.

The cell below computes:

- a confusion matrix to show exactly where predictions are correct or wrong

- a classification report with precision, recall, and F1-score per class

- an ROC curve using pathologic probability as the positive-class score

- a compact training-history plot so we can check whether optimization behaved sensibly

Key Metrics Explained

$$\text{Precision} = \frac{TP}{TP + FP}, \qquad \text{Recall} = \frac{TP}{TP + FN}$$

$$\text{F1} = 2 \cdot \frac{\text{Precision} \times \text{Recall}}{\text{Precision} + \text{Recall}}, \qquad \text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN}$$
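To make the formulas concrete, the sketch below plugs in the counts reported in this notebook's held-out output (31 pathologic exams detected out of 35, 1 false positive among 391 non-pathologic exams). The variable names are chosen for illustration only.

```python
# Counts taken from the held-out evaluation reported in this notebook.
tp, fn = 31, 4    # pathologic exams: detected vs missed (35 in total)
fp, tn = 1, 390   # non-pathologic exams: false alarms vs correctly dismissed (391 in total)

precision = tp / (tp + fp)
recall = tp / (tp + fn)
f1 = 2 * precision * recall / (precision + recall)
accuracy = (tp + tn) / (tp + tn + fp + fn)

print(f"Precision = {precision:.4f}")
print(f"Recall    = {recall:.4f}")
print(f"F1        = {f1:.4f}")
print(f"Accuracy  = {accuracy:.4f}")
```

Note how accuracy is dominated by the 391 non-pathologic exams, while recall depends only on the 35 pathologic ones. That asymmetry is why the two numbers can differ by roughly ten percentage points on the same predictions.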

Clinical Significance of Each Error Type

| Error | Definition | Clinical Impact |
|---|---|---|
| False Negative (FN) | Pathologic predicted as non-pathologic | Most serious: a dangerous missed high-risk tracing |
| False Positive (FP) | Non-pathologic predicted as pathologic | Extra review, monitoring, or escalation |

For that reason, this notebook emphasizes pathologic recall and pathologic ROC behavior, not only overall accuracy.

Methodological emphasis

- The test split is untouched during training and model selection.

- The ROC curve is based on the pathologic-class probability, which aligns the metric with the stated clinical objective.

- The summary statistics explicitly separate sensitivity, specificity, positive predictive value, and negative predictive value.
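The evaluation cell reports a validation-selected decision threshold, but the selection rule itself is not shown in this section. The sketch below is one plausible approach under stated assumptions: it scans a threshold grid on validation probabilities and keeps the value that maximizes balanced accuracy. The function `pick_threshold`, the grid, and the toy arrays are hypothetical and are not the notebook's actual procedure.

```python
import numpy as np

def pick_threshold(y_true, p_pos, grid=None):
    """Return (threshold, balanced accuracy) maximizing balanced accuracy on y_true."""
    if grid is None:
        grid = np.linspace(0.05, 0.95, 91)  # assumed search grid, step 0.01
    y_true = np.asarray(y_true)
    p_pos = np.asarray(p_pos)
    best_tau, best_score = 0.5, -1.0
    for tau in grid:
        pred = (p_pos >= tau).astype(int)
        tp = np.sum((pred == 1) & (y_true == 1))
        fn = np.sum((pred == 0) & (y_true == 1))
        tn = np.sum((pred == 0) & (y_true == 0))
        fp = np.sum((pred == 1) & (y_true == 0))
        sensitivity = tp / max(tp + fn, 1)
        specificity = tn / max(tn + fp, 1)
        score = 0.5 * (sensitivity + specificity)
        if score > best_score:  # strict ">" keeps the lowest threshold among ties
            best_tau, best_score = float(tau), float(score)
    return best_tau, best_score

# Toy validation split (1 = pathologic); values are illustrative only.
y_val = np.array([0, 0, 0, 0, 0, 0, 1, 1, 0, 1])
p_val = np.array([0.05, 0.10, 0.20, 0.30, 0.35, 0.38, 0.45, 0.70, 0.60, 0.90])

tau, score = pick_threshold(y_val, p_val)
print(f"Selected threshold = {tau:.2f}, validation balanced accuracy = {score:.4f}")
```

The key property is that only validation labels enter the search; the chosen threshold is then applied unchanged to the test split, which keeps the test metrics an honest estimate.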

Interpretive emphasis

A strong model is not merely correct most of the time. It is also designed to minimize the more consequential kind of error, especially when one mistake type carries substantially higher clinical risk than the other.

6A. Evaluation Primer and Reading Guide

The final code cell is the notebook's quality-control stage. At this point, the model has already been trained. The remaining question is narrower and more consequential: how does that trained model behave on CTG exams whose labels were hidden throughout model fitting and checkpoint selection?

Inputs entering the evaluation cell

| Input | Meaning at this stage |
|---|---|
| model | The best validation-selected GCN checkpoint from the previous cell |
| Xt, At, and test_idx | The standardized cohort, normalized graph, and held-out test indices |
| history | Stored record of how training and validation behaved across epochs |
| final_probs logic | Probability outputs needed for threshold-based analysis such as ROC |

What this cell computes

- a classification report with precision, recall, and F1-score per class

- a confusion matrix showing exact counts of correct and incorrect test predictions

- an ROC curve that treats pathologic probability as the clinically relevant score

- a training-dynamics plot that checks whether optimization improved quickly and then stabilized

Confusion matrix in plain terms

A confusion matrix counts four kinds of outcomes.

| Reality | Prediction | Name |
|---|---|---|
| Pathologic | Pathologic | True Positive (TP) |
| Non-pathologic | Non-pathologic | True Negative (TN) |
| Non-pathologic | Pathologic | False Positive (FP) |
| Pathologic | Non-pathologic | False Negative (FN) |

For this notebook, we treat pathologic as the positive class because that is the clinically riskier condition to miss.
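Because pathologic is the positive class, the ordering of labels passed to scikit-learn determines where each outcome lands in the matrix. The sketch below uses a small toy label vector (an assumption for illustration) to show the layout produced by `labels=[1, 0]` and to contrast it with the default ordering.

```python
import numpy as np
from sklearn.metrics import confusion_matrix

# Toy labels: 1 = pathologic, 0 = non-pathologic (illustrative values only).
y_true_demo = np.array([1, 1, 1, 0, 0, 0, 0, 0])
y_pred_demo = np.array([1, 1, 0, 0, 0, 0, 0, 1])

# Rows are true labels and columns are predictions, both ordered as [1, 0],
# so the matrix reads [[TP, FN], [FP, TN]].
cm_demo = confusion_matrix(y_true_demo, y_pred_demo, labels=[1, 0])
tp, fn = cm_demo[0]
fp, tn = cm_demo[1]
print(cm_demo)
print(f"TP={tp}, FN={fn}, FP={fp}, TN={tn}")

# With the default ordering [0, 1] the layout flips to [[TN, FP], [FN, TP]].
tn_d, fp_d, fn_d, tp_d = confusion_matrix(y_true_demo, y_pred_demo).ravel()
```

Indexing the matrix without checking the label order is a common source of swapped sensitivity and specificity, so it helps to unpack the four counts into named variables immediately.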

How to read the common metrics

| Metric | Question it answers |
|---|---|
| Accuracy | "How many predictions were correct overall?" |
| Precision | "When the model predicts pathologic, how often is it right?" |
| Recall | "Of all truly pathologic exams, how many did the model catch?" |
| Specificity | "Of all truly non-pathologic exams, how many did the model correctly dismiss?" |
| F1-score | "How well does the model balance precision and recall?" |

ROC curve intuition

A model does not only output hard class labels. It also outputs class probabilities. The ROC curve shows what happens as we move the threshold used to call an exam pathologic. A curve that rises quickly toward the top-left corner indicates that the model can capture most pathologic exams before incurring many false alarms.
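The threshold sweep behind an ROC curve can be sketched on a handful of toy scores. The arrays below are illustrative assumptions, not model outputs from this notebook; `roc_curve` and `auc` are the same scikit-learn functions the evaluation cell uses.

```python
import numpy as np
from sklearn.metrics import auc, roc_curve

# Toy labels (1 = pathologic) and pathologic-class probabilities (illustrative only).
y_true_demo = np.array([0, 0, 0, 0, 1, 0, 1, 1])
p_demo = np.array([0.05, 0.10, 0.20, 0.30, 0.40, 0.60, 0.70, 0.90])

# Each returned threshold is one operating point on the curve.
fpr, tpr, thresholds = roc_curve(y_true_demo, p_demo, pos_label=1)
roc_auc = auc(fpr, tpr)

for f, t, th in zip(fpr, tpr, thresholds):
    print(f"threshold >= {th:.2f}: TPR = {t:.2f}, FPR = {f:.2f}")
print(f"AUC = {roc_auc:.4f}")
```

Lowering the threshold moves along the curve from the bottom-left corner (nothing flagged) toward the top-right corner (everything flagged). The AUC equals the probability that a randomly chosen pathologic exam receives a higher score than a randomly chosen non-pathologic one.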

What to look for in the figures

- In the confusion matrix, the most important number is the pathologic false-negative count, because those are missed high-risk exams.

- In the ROC panel, look for how far the curve stays above the random diagonal baseline across thresholds.

- In the training-dynamics panel, compare the train and validation accuracy traces. A small, stable gap is healthier than a widening divergence.

- Read the printed summary metrics together rather than in isolation: overall accuracy, pathologic recall, non-pathologic specificity, and ROC AUC answer related but different questions.

Takeaway: a strong clinical screening model is not only accurate overall; it also misses few of the dangerous pathologic exams while maintaining enough specificity to avoid excessive false alarms.

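# Detailed evaluation: confusion matrix, pathologic ROC, and training diagnostics
import matplotlib.pyplot as plt
import torch
import torch.nn.functional as F
from sklearn.metrics import (
    ConfusionMatrixDisplay,
    classification_report,
    confusion_matrix,
    roc_curve,
    auc,
)

# Score every exam with the validation-selected checkpoint (no gradients needed).
model.eval()
with torch.no_grad():
    logits = model(Xt, At)
    probs = F.softmax(logits, dim=1).cpu().numpy()

# final_preds is defined in an earlier cell (presumably thresholded at decision_threshold).
preds = final_preds

# Restrict labels, predictions, and scores to the held-out test indices.
test_idx_np = test_idx.detach().cpu().numpy()
y_true_test = y[test_idx_np]
y_pred_test = preds[test_idx_np]
y_prob_pathologic = probs[test_idx_np, 1]  # column 1 = pathologic probability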

print("=" * 60)
print("Held-out test evaluation")
print("=" * 60)
print(f"Decision threshold : {decision_threshold:.2f} (validation-selected)")
print(f"Test set size : {len(y_true_test)}")
print(f"Pathologic test cases : {(y_true_test == 1).sum()}")
print(f"Non-pathologic test cases: {(y_true_test == 0).sum()}")

print("\n" + "=" * 60)
print("Classification report")
print("=" * 60)
print(
    classification_report(
        y_true_test,
        y_pred_test,
        labels=[1, 0],
        target_names=["pathologic", "non-pathologic"],
        digits=4,
    )
)

# With labels=[1, 0], index 0 is pathologic, so cm reads [[TP, FN], [FP, TN]].
cm = confusion_matrix(y_true_test, y_pred_test, labels=[1, 0])
tp = cm[0, 0]
fn = cm[0, 1]
fp = cm[1, 0]
tn = cm[1, 1]

# max(..., 1) guards against division by zero if a class is absent.
pathologic_recall = tp / max(tp + fn, 1)
non_pathologic_specificity = tn / max(tn + fp, 1)
pathologic_precision = tp / max(tp + fp, 1)
negative_predictive_value = tn / max(tn + fn, 1)
overall_accuracy = (tp + tn) / max(cm.sum(), 1)
balanced_accuracy = 0.5 * (pathologic_recall + non_pathologic_specificity)

# ROC uses the continuous pathologic probability, not the thresholded labels.
fpr, tpr, _ = roc_curve(y_true_test, y_prob_pathologic, pos_label=1)
roc_auc = auc(fpr, tpr)

fig, axes = plt.subplots(1, 3, figsize=(18, 5))

disp = ConfusionMatrixDisplay(
    confusion_matrix=cm,
    display_labels=["pathologic", "non-pathologic"],
)
disp.plot(ax=axes[0], colorbar=False, cmap="Blues")
axes[0].set_title(
    f"Confusion matrix (test set, τ = {decision_threshold:.2f})",
    fontsize=12,
    fontweight="bold",
)
axes[0].set_xlabel("Predicted label")
axes[0].set_ylabel("True label")

total = cm.sum()
for row in range(2):
    for col in range(2):
        axes[0].text(
            col,
            row + 0.25,
            f"{cm[row, col] / total * 100:.1f}% of test set",
            ha="center",
            va="center",
            color="dimgray",
            fontsize=8,
        )

axes[1].plot(fpr, tpr, lw=2.5, color="firebrick", label=f"Pathologic ROC (AUC = {roc_auc:.3f})")
axes[1].plot([0, 1], [0, 1], "k--", lw=1, label="Random baseline")
axes[1].fill_between(fpr, tpr, alpha=0.12, color="firebrick")
axes[1].set_xlabel("False Positive Rate")
axes[1].set_ylabel("True Positive Rate")
axes[1].set_title("ROC curve for pathologic detection", fontsize=12, fontweight="bold")
axes[1].legend(fontsize=9)
axes[1].grid(True, alpha=0.3)
axes[1].set_aspect("equal")

axes[2].plot(history["epoch"], history["train_acc"], color="navy", label="Train accuracy")
axes[2].plot(history["epoch"], history["val_acc"], color="darkorange", label="Validation accuracy")
axes[2].axvline(best_epoch, color="gray", linestyle=":", linewidth=1.2, label=f"Best epoch = {best_epoch}")
axes[2].set_xlabel("Epoch")
axes[2].set_ylabel("Accuracy")
axes[2].set_ylim(0.0, 1.05)
axes[2].set_title("Training dynamics", fontsize=12, fontweight="bold")
axes[2].grid(True, alpha=0.3)

loss_axis = axes[2].twinx()
loss_axis.plot(history["epoch"], history["val_loss"], color="forestgreen", linestyle="--", label="Validation loss")
loss_axis.set_ylabel("Validation loss")

train_lines, train_labels = axes[2].get_legend_handles_labels()
loss_lines, loss_labels = loss_axis.get_legend_handles_labels()
axes[2].legend(train_lines + loss_lines, train_labels + loss_labels, loc="best", fontsize=9)

plt.tight_layout()

notebook_figure_dir = proj_root / "notebooks" / "figures"
html_figure_dir = proj_root / "website" / "notebooks_html" / "figures"
for figure_dir in (notebook_figure_dir, html_figure_dir):
    figure_dir.mkdir(parents=True, exist_ok=True)

figure_name = "bio_demo_heldout_evaluation.png"
for figure_path in (notebook_figure_dir / figure_name, html_figure_dir / figure_name):
    fig.savefig(figure_path, dpi=180, bbox_inches="tight")
plt.close(fig)

print(f"Saved figure assets -> {notebook_figure_dir / figure_name}")
print(f" -> {html_figure_dir / figure_name}")

print("\nSummary metrics (pathologic treated as positive class)")
print(f" Accuracy : {overall_accuracy:.4f} ({overall_accuracy * 100:.1f}%)")
print(f" Balanced accuracy : {balanced_accuracy:.4f}")
print(f" Pathologic recall : {pathologic_recall:.4f} ({pathologic_recall * 100:.1f}%)")
print(f" Non-pathologic specificity : {non_pathologic_specificity:.4f} ({non_pathologic_specificity * 100:.1f}%)")
print(f" Pathologic precision : {pathologic_precision:.4f} ({pathologic_precision * 100:.1f}%)")
print(f" Negative predictive value : {negative_predictive_value:.4f} ({negative_predictive_value * 100:.1f}%)")
print(f" ROC AUC (pathologic) : {roc_auc:.4f}")
print(f" False negatives : {fn}")
print(f" False positives : {fp}")

============================================================
Held-out test evaluation
============================================================
Decision threshold : 0.56 (validation-selected)
Test set size : 426
Pathologic test cases : 35
Non-pathologic test cases: 391

============================================================
Classification report
============================================================
                precision    recall  f1-score   support

    pathologic     0.9688    0.8857    0.9254        35
non-pathologic     0.9898    0.9974    0.9936       391

      accuracy                         0.9883       426
     macro avg     0.9793    0.9416    0.9595       426
  weighted avg     0.9881    0.9883    0.9880       426

Saved figure assets -> /Users/mohuyn/Library/CloudStorage/OneDrive-SAS/Documents/GitHub/Hybrid-Quantum-Graph-AI-QAOA-GNN-Biomedical-Optimization/notebooks/figures/bio_demo_heldout_evaluation.png
 -> /Users/mohuyn/Library/CloudStorage/OneDrive-SAS/Documents/GitHub/Hybrid-Quantum-Graph-AI-QAOA-GNN-Biomedical-Optimization/website/notebooks_html/figures/bio_demo_heldout_evaluation.png

Summary metrics (pathologic treated as positive class)
 Accuracy : 0.9883 (98.8%)
 Balanced accuracy : 0.9416
 Pathologic recall : 0.8857 (88.6%)
 Non-pathologic specificity : 0.9974 (99.7%)
 Pathologic precision : 0.9688 (96.9%)
 Negative predictive value : 0.9898 (99.0%)
 ROC AUC (pathologic) : 0.9780
 False negatives : 4
 False positives : 1